Deloitte’s enterprise AI infrastructure survey: A 2028 outlook

Over 70% of surveyed respondents expect to operate ‘AI factories’ at scale by 2028. Getting there will involve important decisions about models, hosting, budgets, and skills.

Artificial intelligence strategy is getting a reality check: Ambition isn’t the constraint on scaling, but infrastructure might be, according to Deloitte’s inaugural AI infrastructure survey. We surveyed 515 US leaders across five industries from enterprises with more than US$500 million in annual revenue in November and December 2025. Leaders were asked about their AI applications, engineering, and infrastructure scaling (see methodology). Over 70% of respondents expect to scale “AI factory” and “AI at the edge” deployments (AI computing near the data source, with minimal reliance on centralized cloud) by 2028, roughly doubling current adoption levels in three years. This growth trajectory would make hybrid AI (AI deployed across mixed infrastructure environments) and distributed AI (smaller AI deployments to bring compute near users/data) a reality for chief information officers, requiring significant transformation.

As information technology leaders shape that future, these insights can help them get a better sense of critical AI and engineering inflection points, where they stand, and how the market expects AI to scale over the next three years. The enterprise leap to 2028 will require informed decisions across multiple areas, including (but not limited to) application scaling, hybrid infrastructure strategies, IT budget operating and growth realities, and the skills to turn AI into an operating advantage.

AI applications rapidly scale from pilots to production, and AI token demand is surging

Enterprises may wonder how quickly their peers are moving from AI pilots to scaled deployment and what it implies for their competitive positioning. Our survey quantifies that velocity: AI applications are steadily moving from pilots into production. The share of respondents with a high number of AI pilots (31 or more) was almost 50% in 2025 and is expected to jump to nearly 70% by 2028. Similarly, the percentage of respondents with the highest AI production-ready use cases (31 or more) is expected to go from 44% in 2025 to 67% by 2028.

This scaling trajectory suggests a consistently expanding market and that many respondents anticipate building and deploying use cases in parallel—scaling both “what’s live” and “what’s next” simultaneously.

What are AI factories?

Deloitte defines an AI factory1 as a purpose-built, high-performance infrastructure—computing power, network, and storage—paired with AI-optimized software and services. An AI factory scales the end-to-end AI life cycle (training through inferencing) across multiple modalities (text, audio, images, etc.). Its output is intelligence, measured by token throughput, which powers decisions, automation, and new AI solutions at enterprise scale.

Complexity is also rising alongside use cases: Nearly all respondents (96%) rate their AI workloads as medium or high complexity, yet Deloitte’s broader research shows that most organizations are still moving beyond basic automation and single-agent solutions, and haven’t yet tackled the business process transformation or technical complexity of multi-agent systems.2 More advanced capabilities—such as contextual reasoning, multi-turn logic, and multimodal generative workloads—add further complexity even for advanced organizations. Despite the groundwork still needed, confidence in execution is high: Ninety-seven percent of respondents say they’re confident or very confident that they can scale AI workloads in the next three years.

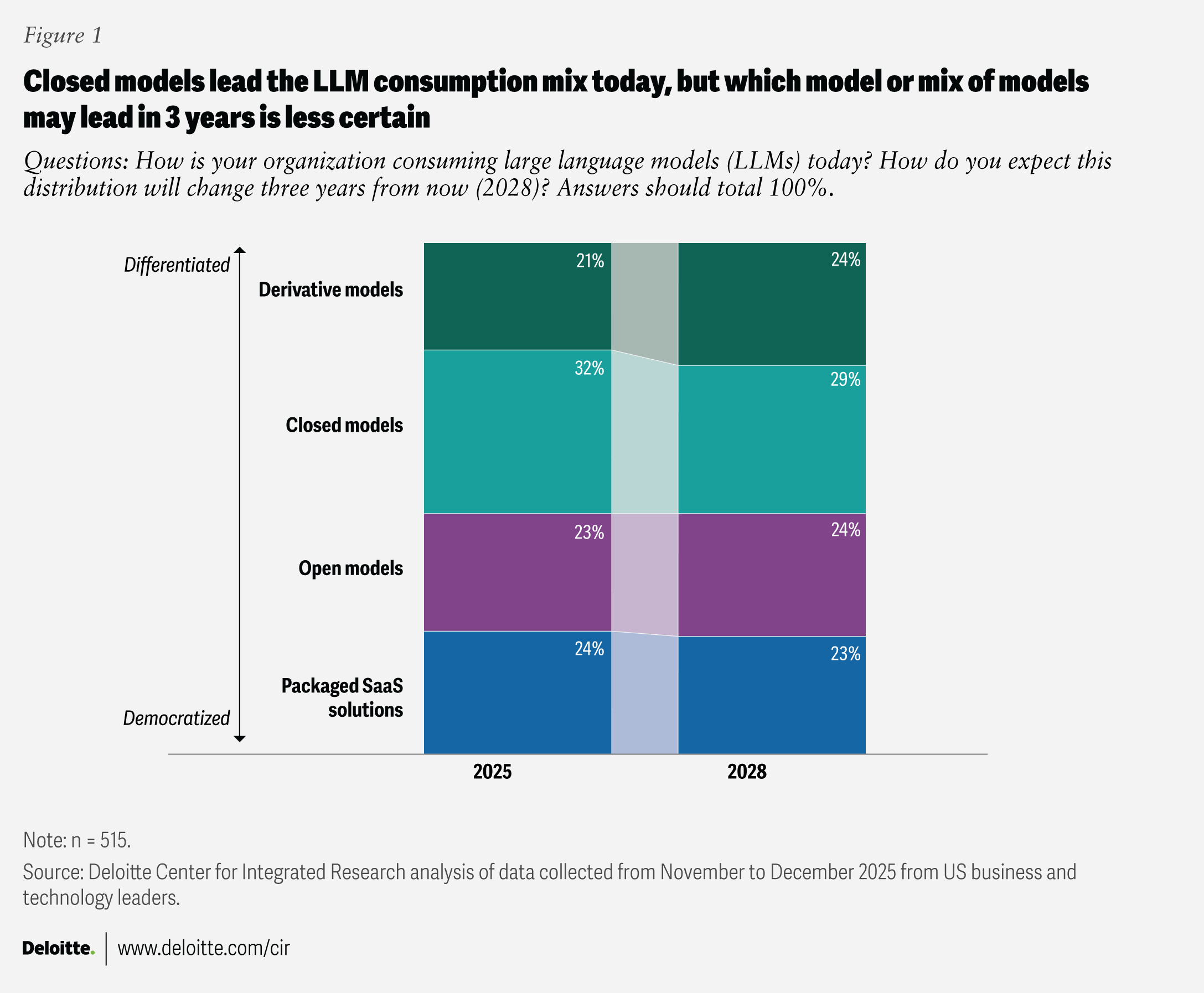

To run these workloads, businesses are choosing among four models.

- Closed: Proprietary and application programming interface-accessed

- Open: Publicly accessible and adaptable

- Packaged software-as-a-service (SaaS): Cloud-delivered and embedded in enterprise apps

- Derivative: Fine-tuned or customized

Closed models are the most used among those surveyed (figure 1), with slightly higher adoption by respondents from innovation-forward industries like technology, media, and telecommunications (34%), and mid-sized organizations (35%). However, as respondents look to 2028, there’s no clear direction on which model or mix of models will win out. Model strategy is evolving so quickly that point-in-time distributions may reflect market conditions when the survey was fielded, not a fixed endpoint.

Beyond the survey, and based on Deloitte’s client experience, AI-first leaders are increasingly bullish on closed models, with usage among market leaders estimated at around 60% to 70%. Closed models provide differentiation via new capabilities for running sophisticated workloads, protecting sensitive data, and enabling monetization.3 Moreover, recent market moves—such as a major technology company shifting a previously open-source model to a proprietary one4—continue to shape strategies and reinforce this tilt toward closed solutions.

At the same time, open-source and derivative models continue to power low-cost experimentation and innovation through strong developer and data science communities. NVIDIA, for example, expanded its open-source AI resources by releasing new open models, training tools, and data sets in 2026.5 Open-source models often perform close to frontier models and may be better suited for smaller, specialized use cases.6

We can infer that agentic SaaS (platforms with AI agents to autonomously execute workflows) is here to stay,7 pointing to more integrated and “agentified” system-of-record solutions (the system where a business domain’s records are created and governed), along with new AI-adjusted pricing models.

Each of the four models has its merits, and adoption may hinge on two factors: which new models become available and what the AI is being used for—to create competitive advantage (differentiation) or to improve standard capabilities faster and cheaper (democratization).

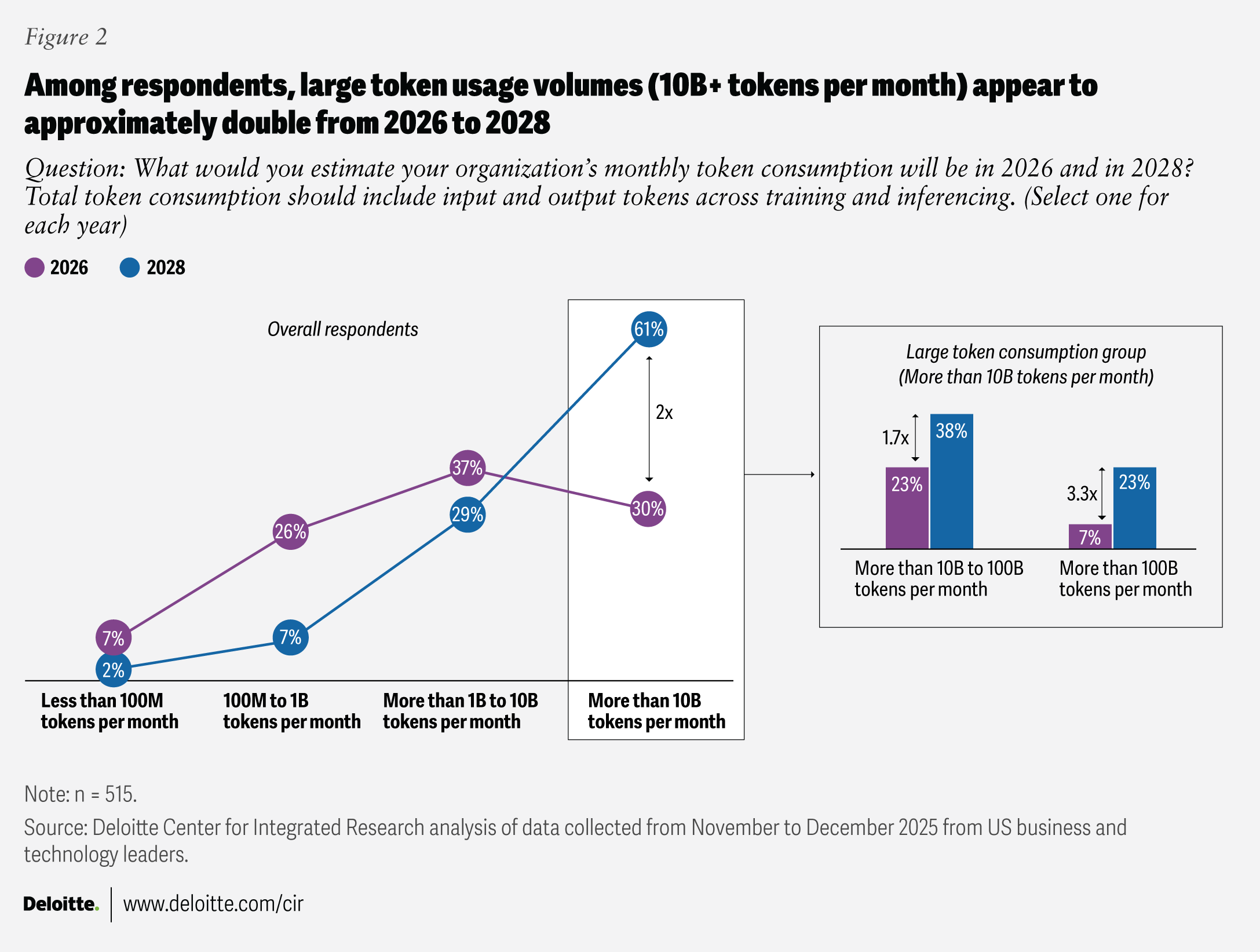

Token consumption is surging, a sign of growth, but also a risk signal

High complexity, bigger scale, and rising enthusiasm eventually compound into an explosion in token consumption. (An AI token is a unit of both compute and cost of AI applications and infrastructure.) Today, the largest proportion of respondents (37%) are consuming one billion to 10 billion tokens per month on average, and 30% are consuming more than 10 billion tokens per month. By 2028, 61% of respondents expect to consume more than 10 billion tokens per month on average, roughly double today’s 30% share, in about two years (figure 2). This increase is primarily driven by the rise in large token footprints of more than 100 billion tokens per month, reflecting a tripling of token use from 2026 to 2028.
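For teams that want to place their own usage against these bands, the following is a minimal sketch in Python. The 1 billion, 10 billion, and 100 billion thresholds come from the text above; treating them as four contiguous bands, the function names, and the price per million tokens are illustrative assumptions, not survey definitions or vendor pricing.

```python
from bisect import bisect_left

# Thresholds in tokens per month. The 1B, 10B, and 100B values appear in the
# survey text; arranging them as four contiguous bands is an assumption.
BAND_EDGES = [1_000_000_000, 10_000_000_000, 100_000_000_000]
BAND_LABELS = ["up to 1B", "1B to 10B", "10B to 100B", "over 100B"]


def token_band(monthly_tokens: int) -> str:
    """Return the consumption band for a monthly token count.

    bisect_left keeps a value that sits exactly on a threshold in the lower
    band, so "more than 10 billion" starts strictly above 10B.
    """
    return BAND_LABELS[bisect_left(BAND_EDGES, monthly_tokens)]


def monthly_cost(monthly_tokens: int, price_per_million: float) -> float:
    """Estimate monthly spend from a blended price per million tokens.

    price_per_million is a hypothetical input; real pricing differs by
    model, by hosting choice, and between input and output tokens.
    """
    return monthly_tokens / 1_000_000 * price_per_million
```

For example, `token_band(5_000_000_000)` returns `"1B to 10B"`, and `monthly_cost(10_000_000_000, 2.0)` returns `20000.0` under the assumed blended price of 2.0 per million tokens.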

The surge in token consumption can also be attributed to the shift toward advanced reasoning models, which generate “thinking tokens” as an output before returning an answer.8

While token consumption growth is a function of increased application adoption, it doesn’t necessarily indicate effective adoption—especially in early stages. In many organizations, token growth can signal inefficient solution patterns (like oversized prompts, weak context management, or limited reuse), reflecting the need to assess the strategic value of AI applications and the underlying cost dynamics. Strong solution design, engineering, and architecture principles can help manage input and output token volumes as AI scales.
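One way to make those inefficiency patterns visible is to summarize per-request token usage. The sketch below is illustrative only: the `RequestUsage` fields, the `usage_summary` function, and the 8,000-token threshold for an "oversized" prompt are hypothetical choices, not part of the survey or of any vendor API.

```python
from dataclasses import dataclass


@dataclass
class RequestUsage:
    """Token counts for one AI request (hypothetical record layout)."""
    input_tokens: int
    output_tokens: int
    cached_input_tokens: int = 0  # input tokens served from a prompt cache


def usage_summary(records, oversized_prompt_tokens=8_000):
    """Summarize input/output volume, reuse, and oversized prompts.

    oversized_prompt_tokens is an assumed threshold; tune it per use case.
    """
    total_in = sum(r.input_tokens for r in records)
    total_out = sum(r.output_tokens for r in records)
    total_cached = sum(r.cached_input_tokens for r in records)
    oversized = sum(1 for r in records if r.input_tokens > oversized_prompt_tokens)
    return {
        "input_tokens": total_in,
        "output_tokens": total_out,
        # A high ratio can point to oversized prompts or weak context management.
        "input_output_ratio": total_in / total_out if total_out else None,
        # A low reuse rate can point to limited reuse of shared context.
        "reuse_rate": total_cached / total_in if total_in else 0.0,
        "oversized_share": oversized / len(records) if records else 0.0,
    }
```

Tracked over time and per application, a summary like this could help separate token growth that comes from wider adoption from growth that comes from avoidable design choices.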

As strategic applications scale in both number of users and complexity, token consumption growth may become the most practical early-warning indicator of shifting infrastructure supply-and-demand dynamics.

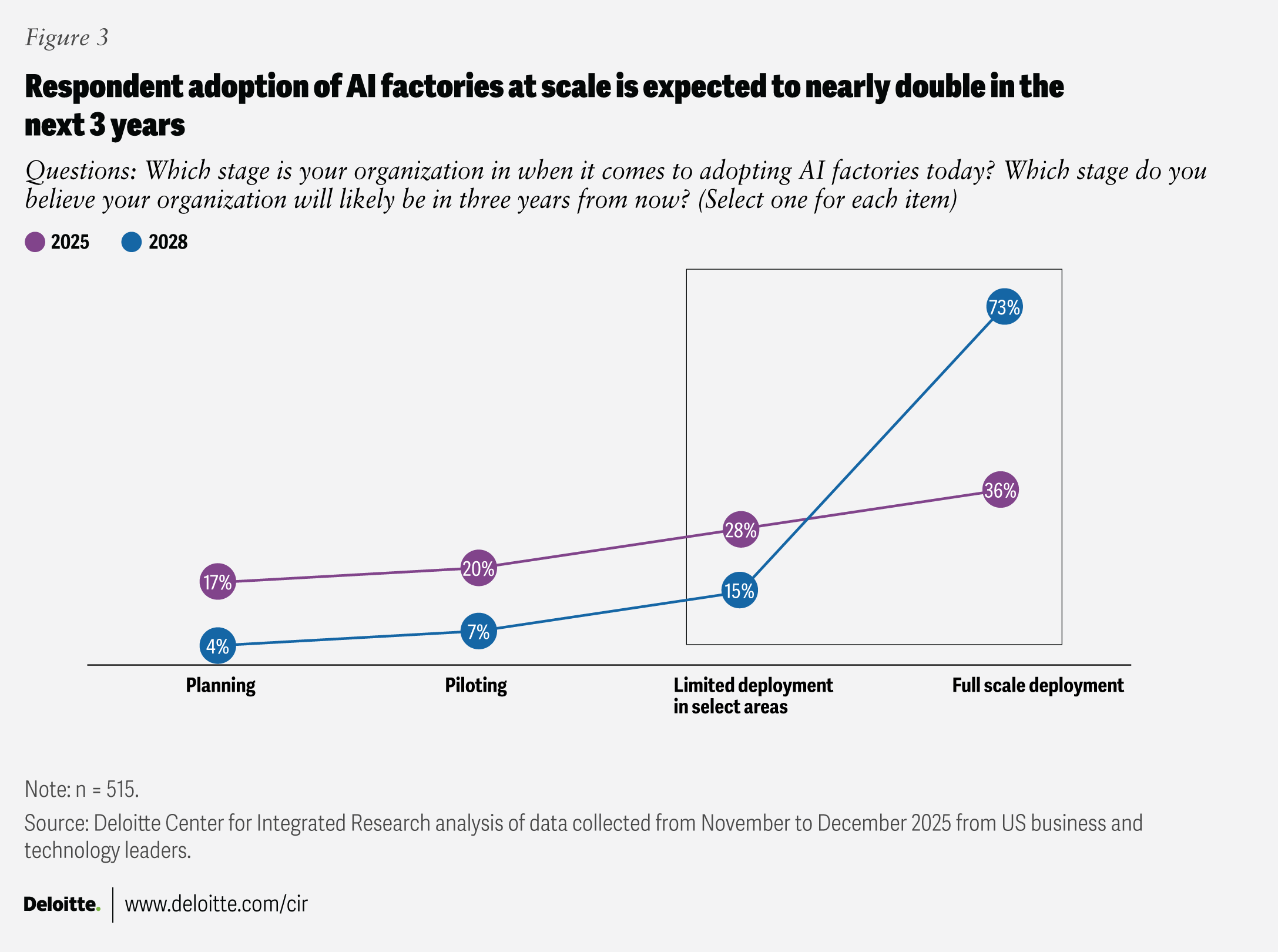

AI factories and edge deployments are set to nearly double by 2028

While organizations are expected to continue hosting applications across different modalities, including cloud, Deloitte asked respondents to what extent they’re investing in building their own AI factories on premises today and where they expect to be by 2028. Their responses were definitive.

Today, 64% of respondents have already started limited or at-scale deployments of AI factories. By 2028, 88% of respondents expect the same. This means that almost a quarter of respondents are deploying AI factories in the next three years, and the vast majority (73%) are expecting to achieve at-scale deployment in that time (figure 3).

AI at the edge shows the same trajectory: Today, 36% of respondents have scaled AI at the edge, and by 2028, 72% expect to achieve that milestone. Cloud-managed edge, which relies on cloud orchestration with computation (or “compute”) running locally on edge hardware, is the most common hardware strategy (reported by 68%). This may mean that many leaders want edge benefits without building an entirely separate operating model.
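The adoption figures above mix two kinds of change: percentage-point movement and relative multiples. A minimal Python sketch that keeps them separate, using only the percentages quoted in this section (the function name is illustrative):

```python
def adoption_change(today_pct: float, future_pct: float) -> dict:
    """Compare two adoption shares, both given in percent of respondents."""
    return {
        # Absolute change in the share of respondents (percentage points).
        "point_change": future_pct - today_pct,
        # Relative change: how many times larger the future share is.
        "multiple": future_pct / today_pct,
    }


# AI factories, limited or at-scale deployments: 64% today, 88% expected by 2028.
factories = adoption_change(64, 88)  # {'point_change': 24, 'multiple': 1.375}

# AI at the edge, scaled deployments: 36% today, 72% expected by 2028.
edge = adoption_change(36, 72)       # {'point_change': 36, 'multiple': 2.0}
```

The 24-point change is the "almost a quarter of respondents" newly deploying AI factories, while the edge figure is a doubling of the scaled share.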

These findings underscore a broader infrastructure direction: Many enterprises want AI that is multimodal (text, voice, image, etc.) and multi-model (a mix of proprietary and open models). In practice, that gives rise to two types of architectures: multicloud (using different cloud models and services) and hybrid cloud (combining public cloud with private or on‑premises AI infrastructure, including their own AI factories) to optimize performance, cost, data residency, and control.

While the growth trajectory is undeniable, 51% of respondents reported that economic uncertainty could be the leading factor that limits long-term investments and changes AI factory plans. This stands to reason, given that AI factories require capital investment and often involve multiyear commitments. Other factors that could delay or slow planned AI factory investments include organizational business challenges (48%), regulatory pressures (48%), and talent and skill gaps (40%).

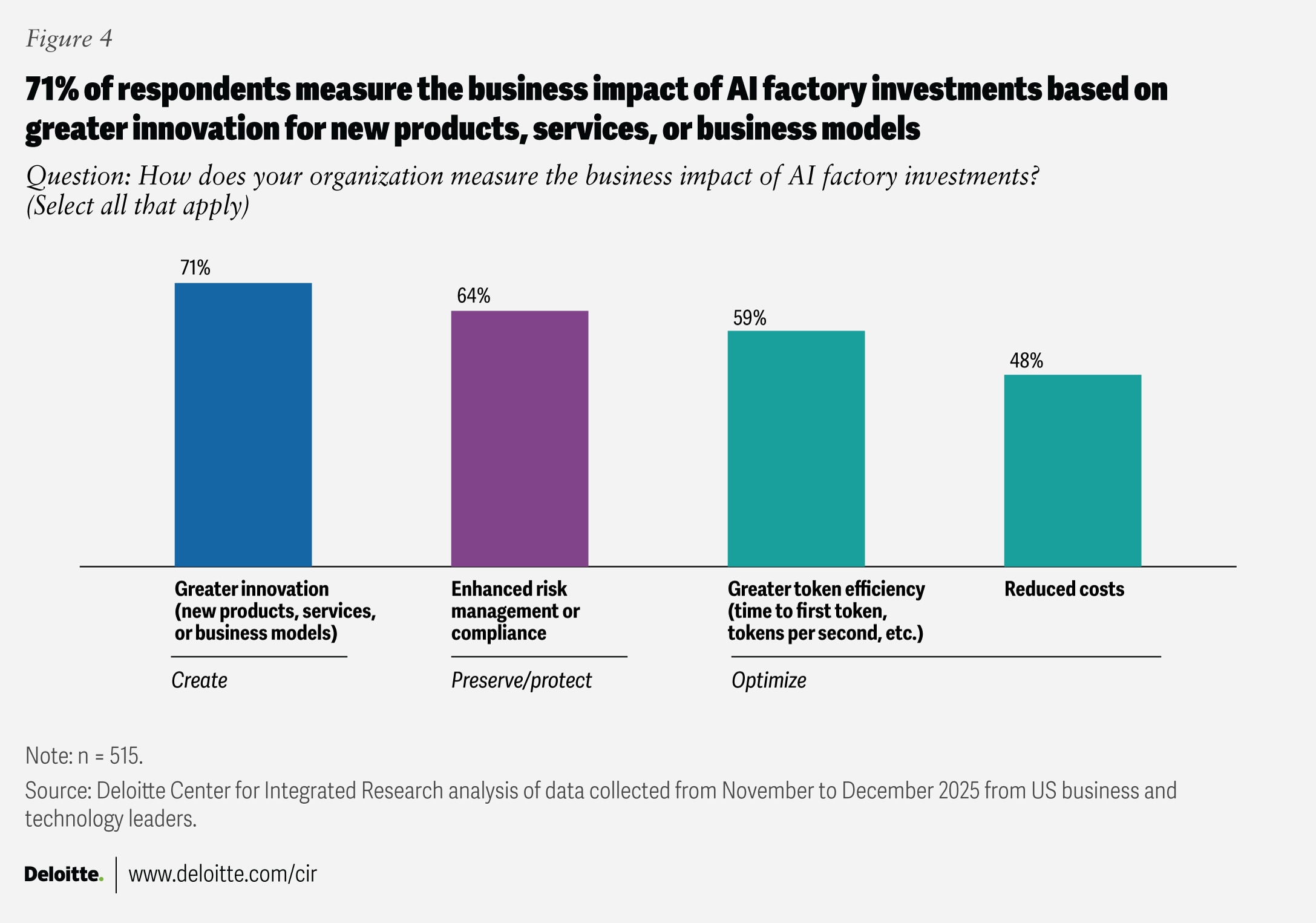

Despite these hurdles, most respondents expect AI factories to support innovation (71%), risk management (64%), and token optimization objectives (59%) (figure 4).

New, increasingly hybrid infrastructure approaches come with the need for new frameworks and platforms for managing AI workloads. Respondents believe that vendor-specific AI frameworks (55%), AI network fabrics (which can enable network virtualization) (50%), and foundational models (47%) can support increased consumption of graphics processing units (GPUs) and hybrid approaches. And as hybrid AI stacks mature, the conversation is shifting from architecture choices to the economics of running AI at scale.

AI infrastructure budgets are growing aggressively, requiring new financial discipline

Budget pressures for many organizations are growing as AI investments scale rapidly across applications and infrastructure. Deloitte’s prior research9 shows that in 2025, AI already accounts for a majority of IT budgets at many companies, with significant year-over-year increases driven by both business and IT demand. AI application and infrastructure spend is becoming table stakes, yet the economic model to manage that spend—across cost transparency, allocation, and value realization—remains immature for many enterprises.

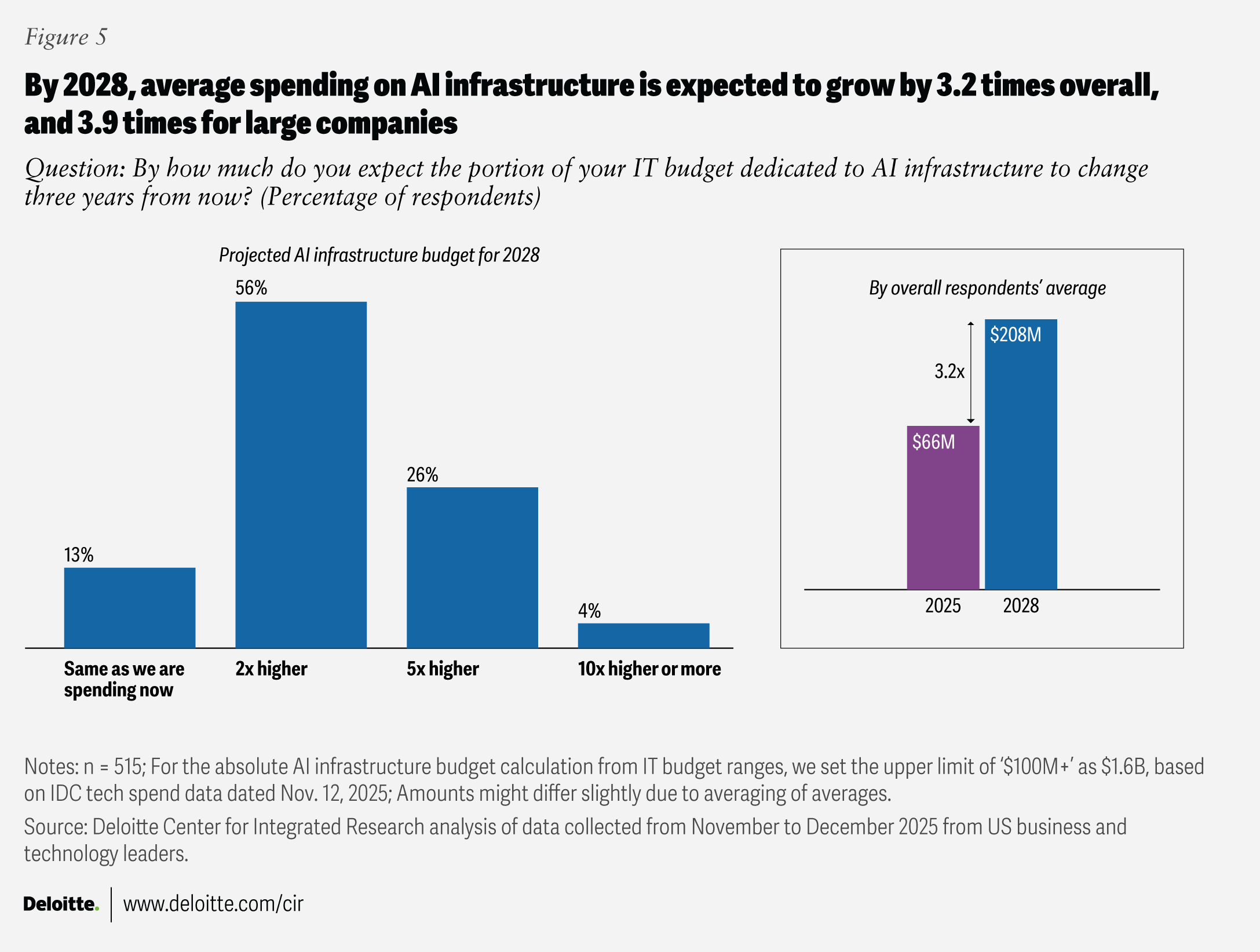

Looking ahead, 86% of respondents expect AI infrastructure budgets to increase over the next three years—on average, budgets are expected to more than triple, with large enterprises projecting even steeper multiples of almost four times (figure 5). These trends signal a shift from one-off AI modernization efforts to a sustained, multiyear commitment—requiring fundamentally different approaches to financial planning, governance, and accountability. Chief technology officers and chief financial officers should work together to plan the right mix of operating and capital investments that advance their AI infrastructure roadmaps. This should be supported by financial operations principles, grounded in token economics, total cost of ownership, and downstream budget implications across organizations of all sizes.
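For planning purposes, a three-year budget multiple can be translated into an implied compound annual growth rate. The sketch below assumes smooth compounding over exactly three years, which the survey does not state; because the text says "more than triple" and "almost four times," the results are rough reference points, not survey findings.

```python
def implied_annual_growth(multiple: float, years: float) -> float:
    """Annual growth rate that compounds to `multiple` over `years`.

    Assumes constant year-over-year growth, which is a simplification.
    """
    return multiple ** (1 / years) - 1


# Average respondent: budgets roughly triple over three years.
print(f"{implied_annual_growth(3, 3):.1%}")  # prints 44.2%

# Large enterprises: almost four times over three years.
print(f"{implied_annual_growth(4, 3):.1%}")  # prints 58.7%
```

Under these assumptions, tripling in three years corresponds to sustained growth of roughly 44% per year, which illustrates why the text frames this as a multiyear commitment.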

In today’s market, the rapid run-up in prices for high‑bandwidth memory10 and nonvolatile memory components is expected to materially increase the cost of AI factories, aggravated by longer procurement times as demand outpaces supply.11 Wafer costs are also expected to rise by 20%,12 likely pushing GPU and central processing unit (CPU) prices higher.

As AI factories scale to utility-grade capacity, power generation and grid interconnections13 also become strategic constraints and potential areas of investment. Scaling, however, could vary by context—edge deployments might only use CPUs and small language models in limited spaces, while centralized AI factories can range from modest GPU clusters to purpose-built, campus-scale infrastructure.

Forty-seven percent to 48% of the surveyed leaders expect to address their power needs through a mix of power from the grid, self-built power generation on or near the AI factory site, and third-party–built generation near the AI factory, potentially reducing single-point dependency and improving resilience and contractual flexibility. Cooling strategies could require a range of approaches, depending on how much power is being consumed and whether the organization plans to invest in traditional air cooling or liquid cooling.

Taken together, these cost and capacity pressures shift the focus to who makes the calls and how trade-offs get made—especially as decision authority, accountability, and budget ownership are often distributed across the organization.

AI decision-making is still IT-led, but talent gaps could slow down effective scaling

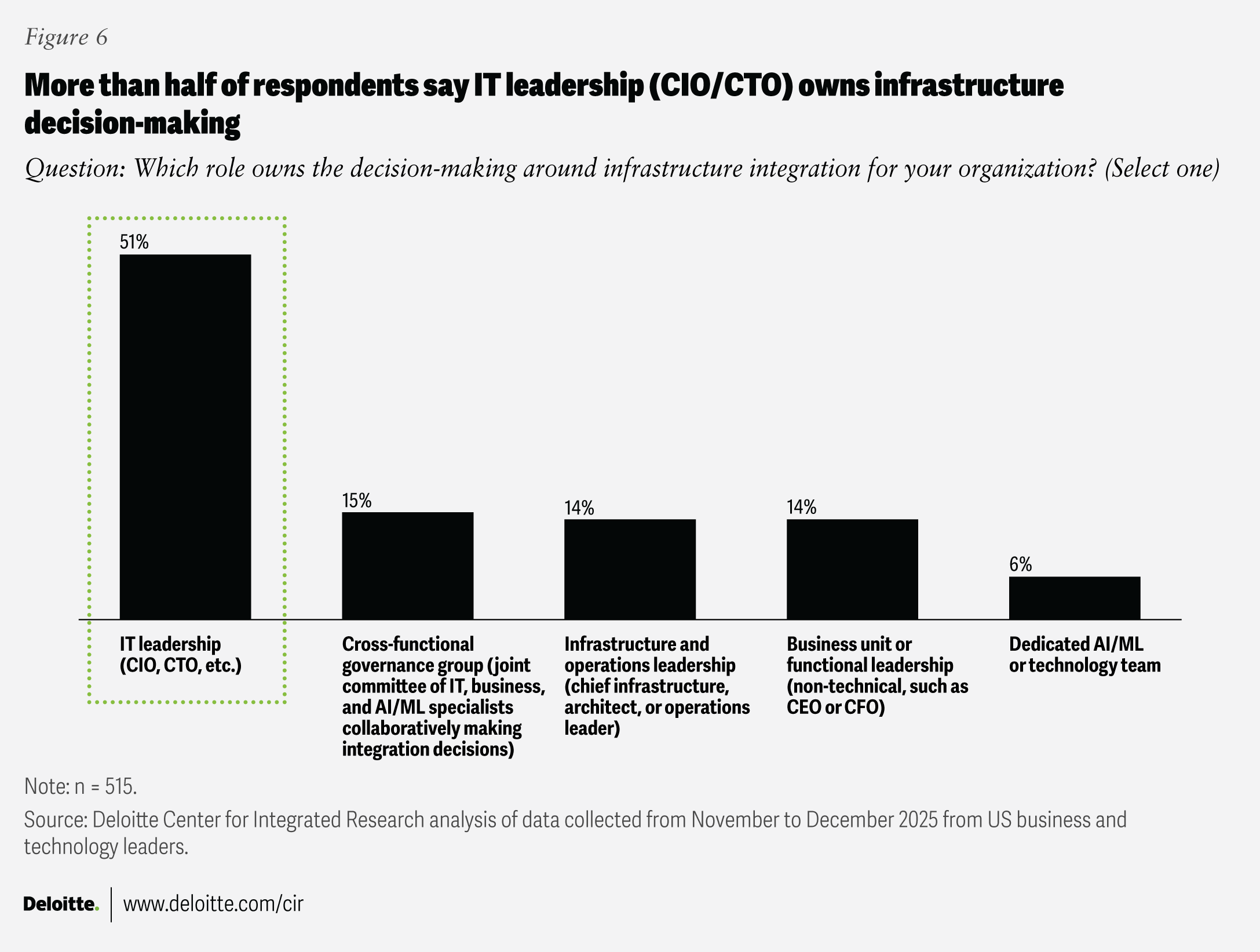

Despite the business and financial implications, AI decision-making is still primarily IT-led. More than half (51%) of respondents say IT leadership (CIOs or CTOs) owns decision-making around infrastructure integration. The remainder is spread across governance (15%), infrastructure (14%), functional (14%), and specialist AI teams (6%) (figure 6). Based on Deloitte’s experience, that isn’t inherently problematic, as IT leaders are proactively pivoting existing data centers and identifying new sites to enable the business. They recognize that today’s application stacks are predominantly CPU-based, but the future operating model must deliver across both CPUs and GPUs. As a result, we’ve observed that CIOs and CTOs are increasingly getting ahead of these decisions to align platform readiness with business demand.

This also puts CIOs and CTOs in the driver’s seat to help business leaders understand AI consumption patterns and their underlying cost implications. To address misalignment risks across teams, they can establish shared decision rights and value-based governance that link platform investments to execution accountability and measurable outcomes. In parallel, they can bring upstream critical insights—such as token consumption patterns and functional AI strategies—that enable more informed C-suite discussions on the economics of AI, from both the supply and demand sides.

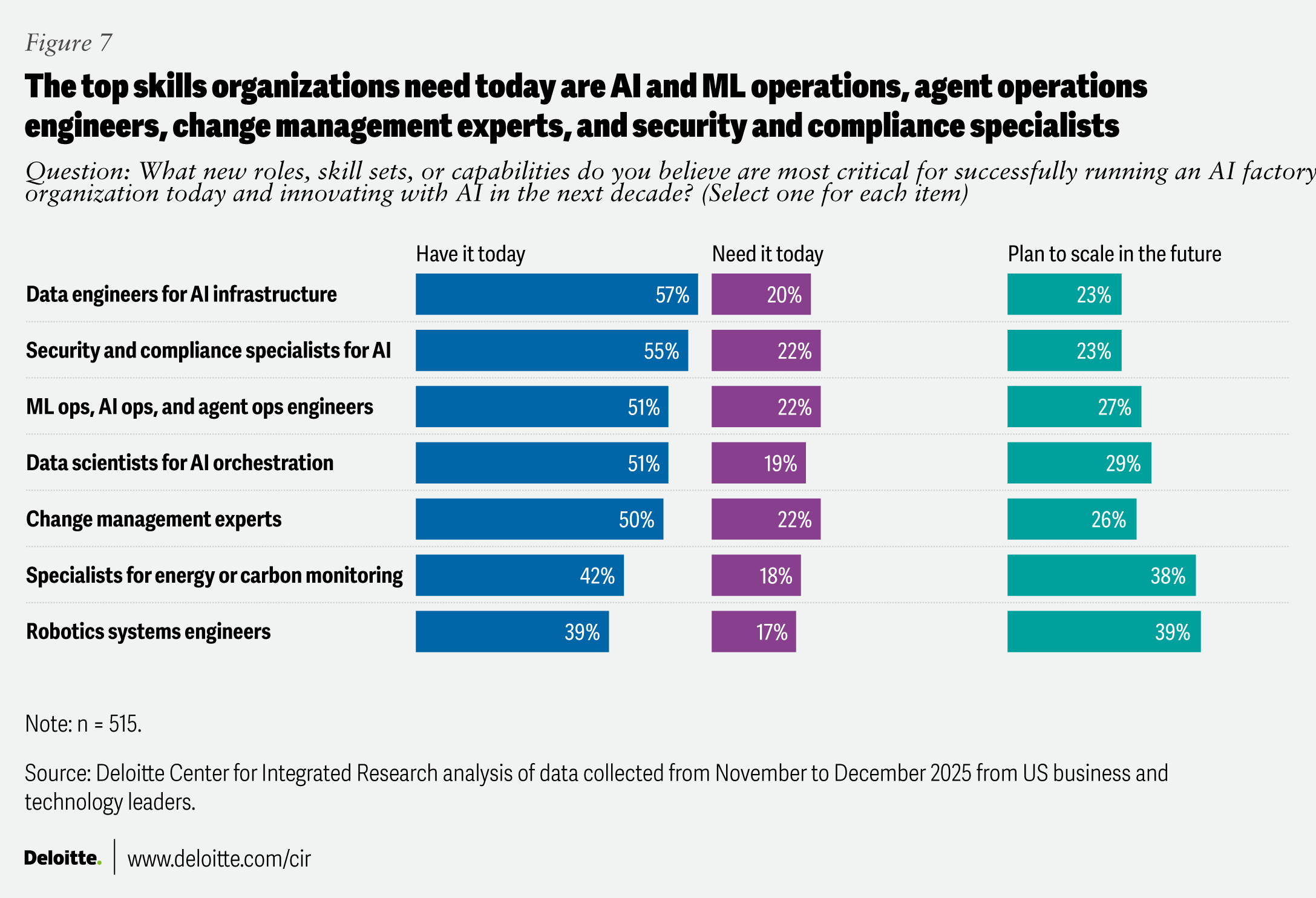

While many AI programs have the funding needed to scale, at least for now, talent remains an issue. First, there’s a skills gap: While many respondents (more than 50%) noted that their organizations have data engineers, security and compliance specialists, AI and machine learning (ML) and agent operations engineers, and data scientists, the need for talent to scale and innovate with AI isn’t fully met (figure 7). Organizations may need more security and compliance specialists and AI and ML and agent operations engineers, as well as change management experts, because success often lies in AI’s reliable adoption in day-to-day workflows rather than mere deployment. In the future, many organizations may be looking to scale skills like robotics systems engineering and carbon and energy monitoring, signaling a move toward physical automation and justifiable energy performance.

Second, there’s a capability divide: Eighty-one percent of respondents believe IT teams have the technical and financial acumen to navigate scaling, compared with 65% for business and product teams (a 16-percentage-point gap). Continued investment in skill development in these strategic areas may be necessary for the vision and execution of AI programs in the future.

What’s the path for AI at scale into 2028?

AI demand is compounding, but scaling AI is still an opaque set of decisions for many organizations. The decisions around model choice, token consumption, and where workloads will run (cloud or AI factory) will likely shape the technical and financial posture of future enterprises. The leap leaders expect their organizations to make in the next three years is ambitious, but not impossible.

For IT leaders, preparation for 2028 starts now: track and audit AI consumption with the same rigor as financial systems, align infrastructure decisions with value realization, and treat power, talent, and governance as first-class architectural concerns. The organizations that succeed will likely not simply scale AI faster, but scale with economic discipline, operational resilience, and clear accountability. The next three years will likely define whether AI becomes a durable operating advantage or a structural cost challenge.

Methodology

Deloitte’s 2025 AI infrastructure survey, fielded in November and December 2025, surveyed 515 US-based business and technology decision-makers across five industries (consumer; energy, resources, and industrials; financial services; life sciences and health care; and technology, media, and telecommunications). Respondents represented both privately and publicly held organizations with a minimum annual revenue of US$500 million and held roles at the director level or above, including C-suite executives and board members.