AI and the future of human decision making

As AI transforms decision-making, how can organizations make quality decisions anchored in human agency and trust?

A tech company launches an AI résumé screener meant to speed hiring, only to find it has been quietly learning past biases and rejecting qualified candidates. A retail service bot makes promises that the company doesn’t want to keep. Clinicians in a hospital lean on a condition-alert tool that speeds treatment but degrades their ability to spot nuances the model isn’t trained to detect. An industrial manufacturer puts AI on the board to surface risks; the directors learn it could be manipulated for personal agendas.

These stories are not science fiction. They can happen, and are happening, to organizations every day, and they raise important questions: What could have been done differently? How do organizations improve input and oversight when AI is involved in decisions? What is the best mix of AI and human in each decision, leveraging enough machine autonomy to improve speed, consistency, and scale while also maintaining sufficient human agency?

AI has the potential to transform human decision-making. However, organizations should first treat this process as a strategic discipline and then design human–machine decision-making relationships accordingly.

Do this well, and AI is more likely to sharpen human judgment, not crowd it out. Today’s cautionary tales can become tomorrow’s competitive advantage.

Running with scissors: AI and decision-making

Leaders today face a torrent of choices in conditions that are noisier, faster, and riskier than ever. Dashboards multiply and data streams expand, but leaders rarely stop to question where that information comes from or whether they can trust it.1 In a 2023 Oracle study, 85% of business leaders reported regretting or questioning decisions they had made in the past. Additionally, 72% of those leaders said the volume of data and their lack of trust in data had stopped them from making any decision at all.2

Many are turning to AI as a solution. In our 2026 Global Human Capital Trends survey, 60% of executives now regularly use AI to support their decisions. Gartner projects that by 2027, half of business decisions will be augmented or automated by AI agents.3 Even boards are beginning to use AI to inform decisions.4

But AI use in decisions may be racing ahead of organizational oversight. As a fundamentally new technology, AI brings distinct challenges.

- Establishing clear chains of responsibility between decisions and consequences, which can be difficult with “black box” algorithms and the potential for biases or inaccuracies in model data5

- People feeling less ownership over AI-made decisions6 and becoming more likely to be dishonest when delegating decisions to AI7

- AI agents acting at vastly different speeds and scales from humans, blurring oversight and stressing traditional controls

- Managers not being prepared to be “supervisors” of AI,8 and many executives lacking sufficient AI literacy to contribute to oversight9

- Organizations lacking necessary ethical frameworks or struggling to translate them into practice10

- Insurance companies not wanting to cover corporate use of AI because of the scale and unpredictability of potential risks11

- Keeping up with complex and constantly changing regulatory requirements around AI.12

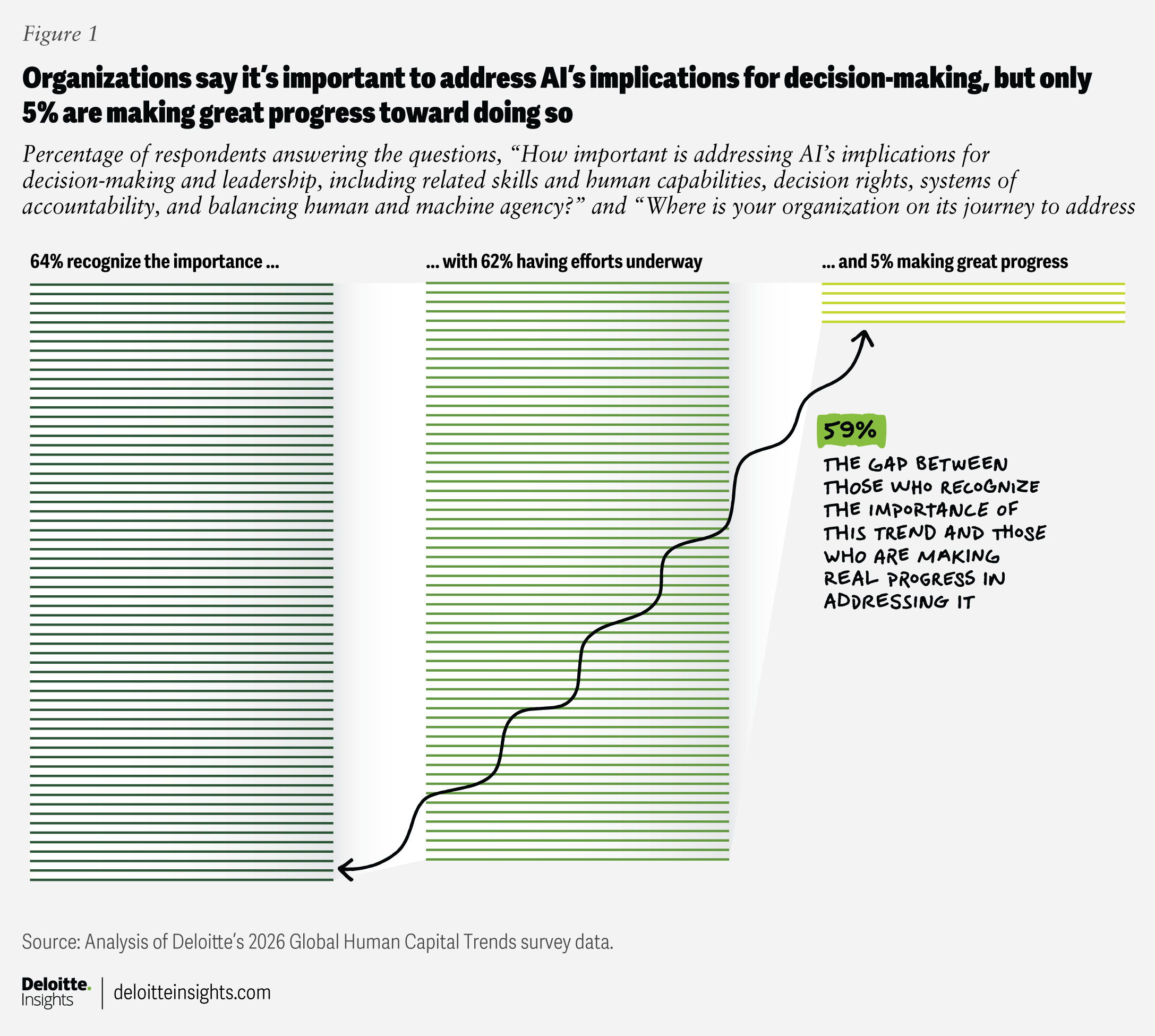

Our survey data suggests that the issue of AI and decision-making is still emerging despite the risks: Nearly two-thirds (64%) of respondents consider it very important to their current success, and a similar number are taking steps to address it. However, only 5% consider themselves to be leading the way (figure 1).

As organizations expand AI-enabled decision-making, many find AI to be amplifying existing deficiencies instead of solving them. Deloitte’s High Impact Decision Intelligence research has found that high-quality decision-making is a discipline that can be learned, improved, and scaled. Yet, more than half of organizations in that study (57%) operate at low decision-making maturity, with few teaching decision skills or providing the necessary tools to support decision-making.13 High-maturity organizations are far more likely to do both and to make decision strategies explicit.14

AI is reshaping organizational decisions, whether organizations are ready or not. To strengthen decision quality and mitigate risks, organizations should first hone decision-making as a discrete capability and then intentionally design how humans and AI interact as deciders.

Elevating the discipline of decision-making

AI-enabled or not, organizations that practice decision-making as a rigorous discipline consistently outperform peers.15 Simply thinking about decisions as a capability is a good start.

Organizations can use decision frameworks to classify choices and pre-assign owners, data, guardrails, and speed for each category (figure 2). Amazon’s one-way vs. two-way door model provides a simple example: Decisions that are difficult or impossible to reverse—one-way doors—require methodical decision-making, while easily reversible decisions—two-way doors—can be made quickly.16 This framework helps teams make reversible bets quickly, while giving irreversible moves higher scrutiny.

Figure 2

Elements of a decision framework

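The classification idea described above can be sketched in a few lines of code. This is a minimal illustration, not a reproduction of figure 2 or of Amazon's actual process: the `Door` categories follow the one-way and two-way door model, while the owners, inputs, guardrails, and target timelines in `POLICIES` are hypothetical placeholders an organization would replace with its own.

```python
from dataclasses import dataclass
from enum import Enum


class Door(Enum):
    ONE_WAY = "one-way"  # difficult or impossible to reverse
    TWO_WAY = "two-way"  # easily reversible


@dataclass(frozen=True)
class DecisionPolicy:
    owner: str                       # who is pre-assigned to decide
    required_inputs: tuple[str, ...]  # data the decision must rest on
    guardrail: str                   # control applied to this category
    target_days: int                 # expected decision speed


# Hypothetical policy table: every value here is an illustrative assumption.
POLICIES = {
    Door.ONE_WAY: DecisionPolicy(
        owner="executive sponsor",
        required_inputs=("risk assessment", "financial model"),
        guardrail="formal review before commitment",
        target_days=30,
    ),
    Door.TWO_WAY: DecisionPolicy(
        owner="team lead",
        required_inputs=("working hypothesis",),
        guardrail="review results after launch",
        target_days=2,
    ),
}


def classify(reversible: bool) -> Door:
    """Map a decision to a category based only on reversibility."""
    return Door.TWO_WAY if reversible else Door.ONE_WAY


def policy_for(reversible: bool) -> DecisionPolicy:
    """Look up the pre-assigned owner, inputs, guardrail, and speed."""
    return POLICIES[classify(reversible)]
```

Calling `policy_for(True)` returns the lighter two-way door policy, while `policy_for(False)` returns the slower, more heavily reviewed one. Real frameworks would likely classify on more dimensions than reversibility alone.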

Design for decision rigor and integrity

Most organizations still treat decisions as by-products of meetings and dashboards rather than as something worthy of explicit design and focus. To add rigor and integrity, consider the following:

- Surface the decisions that matter. Decision rigor and integrity start with surfacing the important decisions, clarifying owners and inputs, and making those structures explicit in workflows. The Massachusetts Institute of Technology’s concept of intelligent choice architectures is useful here: Instead of trying to predict a single “right” answer, leaders should intentionally shape the environment in which decisions happen so better choices are easier and more reliable to make.17

- Encourage a sound decision basis and culture. A strong decision basis (that is, data and hypotheses) is important to decision-making hygiene, and even more so with AI. Before using AI, define what constitutes fit-for-decision evidence and how it will be used. Build norms that value candor, evidence, and decision quality over politics or hierarchy.

Design for decision rights and governance

Deloitte research in organization design found that a surprising number of organizations lack clarity about decision rights.18 AI will likely increase the pressure here and muddy decision rights further. To achieve clarity, organizations can take the following actions:

- Modernize decision rights for AI. Most organizations have already acknowledged that humans need to be on the loop when it comes to AI making decisions—having humans oversee the results. They are also working to involve humans in the loop by ensuring humans work iteratively with AI, passing work back and forth. In defining who does what, legacy, person-centric decision-rights models (for example, the classic RACI project management tool with four key responsibilities: responsible, accountable, consulted, and informed) can be a first step.19 The challenge is that they presume static authority. With AI, rights need to be more dynamic, incorporating override privileges, escalation paths, and consensus rules engineered into the system so humans and agents coordinate who decides, when, and on what basis.

Atlassian, for example, recognized that unclear boundaries between AI-led and human-led decisions were creating bottlenecks. Instead of creating a rigid rulebook, the company treats decision rights as something that evolves, regularly revisiting where AI should handle routine tasks and where humans need to step in for higher-risk calls. Teams gain a clear understanding of what’s automated and what requires human judgment, and that transparency builds trust.20

- Modernize governance for AI. AI’s role in decisions should find its way onto the agendas of existing governance bodies. AI can introduce board-level concerns like reputational and financial risks. Yet, Deloitte research on AI and boards finds engagement is, so far, limited. According to the study conducted in 2024, nearly half (45%) of surveyed board members and C-suite executives said AI is not on the agenda, and only 14% discussed it at every meeting.21 In an updated survey in 2025, 40% said AI has caused them to think differently about their boards’ makeup, recognizing the need for change.22

IBM puts AI decision governance into practice through dedicated ethics boards that review high-impact uses and are guided by the company’s published principles for trust and transparency. Its approach blends policy, cross-disciplinary review, and tools that track compliance and help teams act with confidence. By treating governance as a design system—not just a control—IBM is able to scale AI while preserving trust.23

As AI rapidly evolves, so, too, does global regulation. Regulatory frameworks like the European Union AI Act24 are requiring boards to establish clear AI oversight mechanisms and evidence trails well before enforcement deadlines. Organizations should start by understanding the strategic impacts of regulations and creating clarity around the operational challenges.

Elevating both humans and machines as decision makers

Even the best decision processes can fail if the decision-makers—people and AI—aren’t prepared, supported, and evaluated. Organizations should design for the decision-making competence they need: building human decision-making skill and measuring AI performance with rigor befitting their mission-critical contributions.

Design to grow decision-making skills

There’s an irony: Many organizations teach AI how to decide while assuming humans already know how. These practices can help:

- Treat decision-making as a critical foundation of leadership development efforts. AI-enabled decision-making is rising to the top of what defines a modern leader, and it’s teachable. In leadership development programs, for example, leaders can learn how to build and test hypotheses to inform decisions and pair that skill with data fluency so they can interrogate uncertainty with data.

To accelerate frontline judgment, BAE Systems rolled out a case-based learning program that places leaders in realistic, high-ambiguity scenarios. Teams practice making decisions under pressure, testing what information to trust and how to frame hypotheses, then debrief against clear criteria. The repetition builds rigor without slowing pace, and early feedback shows better cross-functional coordination and faster, more confident decisions.25

- Train managers to manage AI. Management skills now include managing machines as well as people: scoping agent autonomy, judging model outputs, and knowing when to override are likely new skills for most.26 This issue is likely to get more difficult as organizations flatten and increase spans of control.27

DBS Bank reinforces decision integrity through its PURE principles—purposeful, unsurprising, respectful, explainable—and a responsible AI/data use framework overseen by senior committees. These standards guide employee-facing tools like iGrow, an AI platform used by most staff, which helps leaders make transparent, data-informed choices about learning and mobility. By pairing clear frameworks with governance and training, DBS speeds everyday decisions while maintaining strong human oversight.28

Design to evaluate AI performance

AI’s role in decision-making requires explicit evaluation, including quality criteria, regular retraining, and fit-for-risk oversight. This is not a new version of employee performance management. AI evaluation is a growing discipline requiring its own expertise and scale, which can be built through the following practices.

- Monitor and evaluate AI behavior continuously. Use processes and standards to track AI model performance, fairness, and reliability after deployment. Emerging human-AI collaboration research argues for tracking human outcomes such as the degree to which interaction supports trust and healthy judgment, not just model accuracy.29 Spotify, for example, relied on humans to define the standards for evaluating a high-quality podcast summary, then used those to inform automated evaluation.30

- Create anchors for accountability. Build quality checkpoints into workflows—risk thresholds that trigger human review, and logs of all human-AI disagreements—so choices can be traced and learned from over time. It is essential to understand and record AI agent steps and reasoning, and to maintain audit trails, especially for more autonomous systems.

- Be aware of cognitive asymmetry. Humans are physiologically wired not to notice everything around them. Pair that fact with AI’s expanded scale and speed, and it’s likely that humans will not always be the best monitors of what can be opaque systems. The European Union’s TechDispatch on human oversight cautions against assuming that a human-in-the-loop ensures safety; organizations should explicitly design authority, interfaces, and escalation so humans can effectively intervene.31 For example, some enterprises are introducing “guardian agents”—specialized agents that watch, test, and gate other agents to keep autonomy within bounds.32
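The accountability anchors described above, a risk threshold that triggers human review and a log of human-AI disagreements, can be sketched as follows. This is a minimal illustration under stated assumptions: the `REVIEW_THRESHOLD` value, the `decide` function, and the log fields are all hypothetical, and a production system would also need durable storage and a record of the agent's reasoning steps.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed cutoff: risk scores at or above this value require a human decision.
REVIEW_THRESHOLD = 0.7


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def disagreements(self) -> list:
        """Return only the entries where the human overrode the AI."""
        return [e for e in self.entries if e["overridden"]]


def decide(case_id: str, ai_choice: str, risk_score: float,
           log: DecisionLog, human_choice: Optional[str] = None) -> str:
    """Return the final choice and record it in the audit log.

    High-risk cases cannot proceed without a human choice; for any case,
    a human choice takes precedence over the AI's suggestion.
    """
    needs_review = risk_score >= REVIEW_THRESHOLD
    if needs_review and human_choice is None:
        raise ValueError(f"case {case_id} requires human review")
    final = human_choice if human_choice is not None else ai_choice
    log.entries.append({
        "case_id": case_id,
        "ai_choice": ai_choice,
        "final_choice": final,
        "risk_score": risk_score,
        "human_reviewed": human_choice is not None,
        "overridden": final != ai_choice,
    })
    return final
```

A low-risk case passes straight through on the AI's suggestion, a high-risk case raises an error until a human weighs in, and `log.disagreements()` gives reviewers the overridden cases to learn from over time.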

Elevating human agency in the human and machine decision-making relationship

Human agency underpins the degree to which individuals feel influence over, and responsibility for, events around them. People more readily accept responsibility when they feel real influence.33

Design for human agency

Leaders should be intentional about supporting human agency and building trust as humans and machines interact to make decisions, including these practices:

- Build accountability through human agency. Clearly connect choices to choosers, giving decision-makers confidence grounded in comprehension (not mere compliance). Liberty Mutual Insurance enables claims adjusters to explore scenarios using AI but with the ability to override AI’s suggestions. As one leader told MIT Sloan Management Review, “The moment AI enters the workflow, the real question isn’t ‘What does the model say?’ It’s ‘Who gets to disagree with it, and how fast?’”34

- Determine the level of AI agency based on desired outcomes. Design how much autonomy AI agents have based on the risk profile of the decision and the human and business outcomes desired. Researchers at Stanford recently introduced an auditing framework that matches the level of AI agent autonomy to the degree of human agency desired. When the work is mission-critical or ambiguous, greater human agency is needed. However, for well-defined and low-risk tasks, decisions can more easily be shifted to fully autonomous agents. This taxonomy helps calibrate oversight while preserving the human capacity to intervene where stakes or uncertainty are high.35
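One way to make this calibration concrete is a simple rule that maps a decision's risk and clarity to an autonomy level. The sketch below is an illustrative assumption, not the Stanford framework itself: the three `Autonomy` levels, the `risk` labels, and the `well_defined` flag are hypothetical simplifications of the principle that mission-critical or ambiguous work needs more human agency.

```python
from enum import Enum


class Autonomy(Enum):
    FULLY_AUTONOMOUS = "AI decides and acts"
    HUMAN_ON_THE_LOOP = "AI decides, human oversees results"
    HUMAN_DECIDES = "AI advises, human decides"


def autonomy_for(risk: str, well_defined: bool) -> Autonomy:
    """Pick an autonomy level from a risk label and task clarity.

    risk is one of "low", "medium", or "high" (hypothetical labels).
    """
    if risk not in {"low", "medium", "high"}:
        raise ValueError(f"unknown risk level: {risk}")
    # High stakes or ambiguity: keep the decision with a human.
    if risk == "high" or not well_defined:
        return Autonomy.HUMAN_DECIDES
    # Well-defined and low-risk: safe to delegate fully.
    if risk == "low":
        return Autonomy.FULLY_AUTONOMOUS
    # Well-defined but medium risk: delegate with human oversight.
    return Autonomy.HUMAN_ON_THE_LOOP
```

Under these assumptions, a well-defined, low-risk task is delegated fully, while anything high-risk or ambiguous stays with a human decider regardless of how capable the agent appears.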

Design to build trust in AI

Trust is an essential element of human collaboration. It is also essential for humans working with technology such as AI that interacts and evolves. Deloitte’s Trustworthy AI research shows that workers who trust the AI agents they work with are 10 times more likely to see those agents as critical to creating value.36

People extend trust to technology when it consistently demonstrates reliability, capability, transparency, and humanity.37 The recommendations in this chapter address these four factors and are likely to lead to greater trust.

Humans can confidently collaborate with AI and accept responsibility for the results when they know how the decision was made and how they materially influenced it. Trust rises when AI is used where people welcome it and is constrained where they don’t. Many users want AI to play some role in analytical, high-stakes domains (for example, fraud detection, weather forecasting, and drug discovery) but little or no role in more personal or value-laden decisions.38

Decision-making that works for humans and machines

Organizations that elevate decision-making as a discipline, improve decision-making skills, evaluate AI’s involvement in decisions, and design for human agency in decision-making can gain speed and quality without sacrificing trust. Those that don’t risk opaque choices, diluted accountability, and the slow erosion of human agency at precisely the moment when clarity matters most.

Evidence suggests the upside is meaningful: Technology can accelerate analysis and clarify uncertainty, but it cannot replace human purpose, values, and judgment behind choices. This is the path to AI as a trusted adviser—improving the speed, scale, and quality of decisions while keeping humans firmly in charge of the “why.”

Methodology

For the 2026 Global Human Capital Trends report, Deloitte worked in collaboration with Oxford Economics to survey more than 9,000 business and human resources leaders across many industries and sectors in 89 countries. In addition to the broad, global survey that provides the foundational data for the report, Deloitte supplemented its research with worker-, manager-, and executive-specific surveys to uncover where there may be gaps between leader and manager perception and worker realities. The survey data is complemented by more than 50 interviews with executives and subject matter experts from some of today’s leading organizations. These insights helped shape the trends in this report.