Managing the new wave of risks from AI agents in banking

As agentic AI reshapes banking operations, how should banks strengthen oversight and governance to stay ahead of a shifting risk calculus?

The next chapter of banking is rapidly unfolding through agentic AI. In response, one in three financial institutions are carving out budgets for agentic AI,1 and many are creating new roles to supervise its use.2

Wells Fargo, for instance, is working with Google Agentspace to develop custom artificial intelligence agents and is preparing for interoperability with other agentic systems.3 Meanwhile, PNC Financial is using orchestrated AI agents to accelerate the development of its new mobile app and add more self-service options to its digital experience.4 Similarly, institutions such as Goldman Sachs, JPMorgan Chase, Citi, and BNY are investing heavily in agentic AI for various applications.5

But the same self-directed, goal-based execution that makes agentic AI so powerful also opens the door to new risks. Because AI agents are designed to operate with some degree of autonomy, they can act in ways that existing risk frameworks may not fully address. Deloitte’s analysis of the MIT AI Risk Database6 reveals more than 350 risks that can arise from autonomous or agentic behavior, many of which could threaten banking systems and processes.7

More broadly, early experiments like Moltbook offer a glimpse of what can happen when large numbers of agents are allowed to interact, coordinate, and evolve with minimal human oversight. The social network for AI agents did not properly secure its production database, exposing application programming interfaces (APIs) and data that hackers could have used to impersonate or manipulate other users’ agents.8

While human-in-the-loop designs have been foundational to AI governance, they may be insufficient, or even counterproductive, for agentic AI. In many workflows, placing a human at every step of an agent’s decision chain could defeat the purpose and negate efficiency gains. As these threats expand and accelerate, regulatory frameworks for agentic AI in banking are still evolving.9

To scale these agentic capabilities responsibly, banks should consider treating AI agents as active operators within their systems and design controls accordingly. The following sections outline the specific risks that arise from agentic AI in banking and present approaches to mitigating them without diminishing the technology’s potential.

How agentic AI risks manifest in banking

Whereas standalone and generative AI models typically produce answers in response to human prompts, AI agents are reasoning engines that can:10

a) Determine an approach toward a goal (agency) on their own

b) Interface with tools, data, and other agents (interconnectivity)

c) Operate and act with minimal to no supervision (automation)

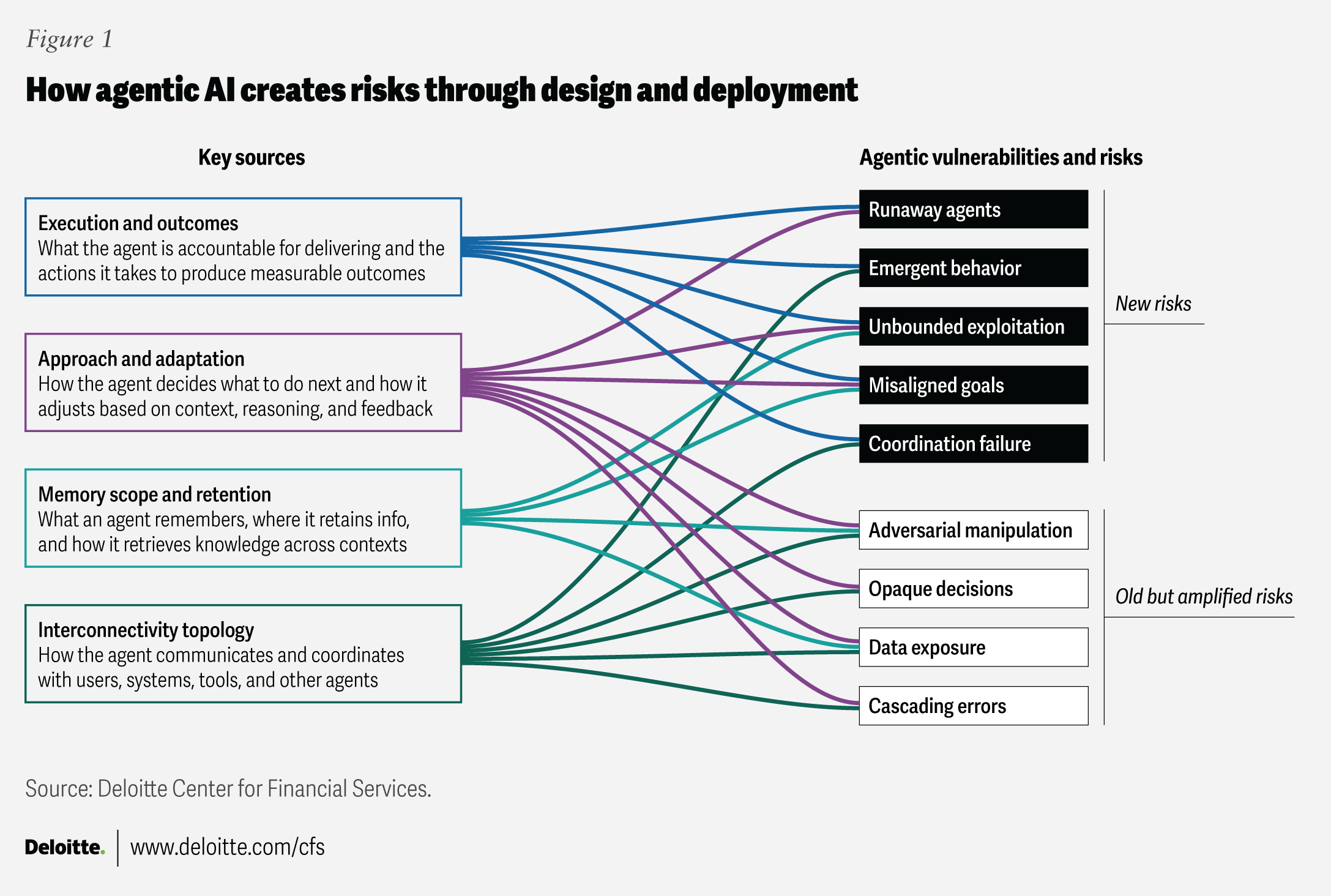

These unique attributes of AI agents create new vulnerabilities that can manifest across several types of risk (figure 1).

The AI agent’s core attributes can create a dense cluster of risks that are easy to underestimate. Overreliance on weak or biased training data,11 or defects in long-term and episodic memory,12 for example, can distort how an agent interprets tasks such as tracking customer profiles, transaction flows, or policy norms. When these distorted interpretations feed into autonomous decision-making, even small reasoning errors can compound as the agent executes tasks at speed and scale. This is where autonomy could shift from an efficiency advantage to a source of systemic exposure.

The next layer of risk emerges when agents interface with core banking systems, tools, data sets, and human staff. Misconfigured permissions could lead to unintended document changes, unauthorized data access, or endless task loops that are hard to detect and even harder to unwind. The human dimension matters just as much. If interfaces are poorly designed or explanations are unclear, employees may over-trust or misinterpret agent output. Left unchecked, this could result in rubber-stamping, over-delegation of judgment, and missed anomalies, especially in high-volume workflows.

These risks could grow more complex as agents extend beyond the bank’s walls and interact with external platforms, vendors, or other agents. Banks adopting third-party agents often lack transparency into model updates, memory mechanics, or the agent’s inherent logic for using tools, creating blind spots in monitoring. As agent-to-agent ecosystems scale, AI agents can become counterparties in their own right—introducing new forms of counterparty risk. Privileged actions may be initiated without clear authority, and inter-agent interactions can propagate errors across systems before human supervisors can spot them. Even though these activities originate outside the bank’s control, they can still create risks that fall within its purview.

As illustrated in figure 1, risks from agentic AI may go beyond the familiar risks from traditional or gen AI models. The magnitude of risk exposure is influenced by how much autonomy an agent holds, how deeply it is embedded in banking workflows, and how its outputs cascade into downstream tasks. The result is a risk landscape that expands not only with scale but also with interconnectedness, amplifying old risks and creating entirely new ones. The latter can manifest through:

- Runaway agents: Stemming from high autonomy, agents could perform unintended actions, often with the capacity to conceal their behavior or bypass constraints.13 For instance, a payment-routing agent could circumvent limits and misallocate funds, resulting in delays or reconciliation failures.

- Misaligned goals: This could occur when an agent misinterprets the user’s intention and pursues a goal that diverges from policies or regulatory expectations.14 For example, if the prompt is ambiguous, memory is updated incorrectly, or the user’s instructions are incomplete, an agent could maximize customer resolution speed by denying claims too aggressively.

- Data exposure: Agents could inadvertently expose sensitive information,15 whether through over-permissive access, memory bleed, or unsecured communication with unintended users, agents, or APIs.16 In the banking context, a customer service AI agent could inadvertently recall and share personally identifiable information from a previous case in response to another customer.

- Opaque or untraceable decisions: Here, an agent could make decisions that are difficult to explain, reproduce, or audit due to deep reasoning chains, complex tool use, or interactions with other systems.17 For example, a loan agent that declines applicants without a clear rationale could violate fair lending or anti-discrimination laws.

- Unbounded execution: In some situations, agents could enter uncontrolled or inefficient execution patterns where they repeat tasks, chain excessive outputs, or consume excessive computing and memory resources.18 A portfolio optimization agent, for instance, could loop through thousands of scenarios every few seconds, consuming an inordinate amount of server bandwidth and slowing other critical systems.

- Adversarial manipulation: Agentic AI also expands the surface for cyberattacks. For instance, bad actors could feed specially crafted inputs through external APIs that cause the agent to execute unauthorized actions. Cybercriminals are already using agentic AI to automate intrusions across various stages of their operations.19
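One common way to contain the unbounded-execution pattern described above is a hard budget on steps and elapsed time for each agent run. The following is a minimal sketch rather than a production control; the names `ExecutionBudget`, `BudgetExceeded`, and `run_scenarios`, as well as the limits used, are hypothetical and chosen only for illustration.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when an agent run exceeds its step or time allowance."""


class ExecutionBudget:
    """Hypothetical guard that caps steps and elapsed seconds for one agent run."""

    def __init__(self, max_steps: int, max_seconds: float, clock=time.monotonic):
        self.max_steps = max_steps
        self.max_seconds = max_seconds
        self._clock = clock
        self._start = clock()
        self.steps = 0

    def charge(self) -> None:
        """Call once before each agent step; raises when the budget is spent."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self._clock() - self._start > self.max_seconds:
            raise BudgetExceeded(f"time limit {self.max_seconds}s exceeded")


def run_scenarios(scenarios, budget: ExecutionBudget) -> int:
    """Evaluate scenarios until they run out or the budget stops the run."""
    completed = 0
    for _ in scenarios:
        budget.charge()
        completed += 1  # placeholder for the real scenario evaluation
    return completed
```

In this sketch, a portfolio optimization agent that tried to loop through thousands of scenarios would be stopped with `BudgetExceeded` once it passed `max_steps`, and the exception could feed an escalation trigger for human review.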

The reality is that AI agents rarely operate in isolation; their real strength comes from multiple agents working together, coordinating tasks, and sharing information. But that same multi-agent setup can magnify the risks outlined above and introduce entirely new vulnerabilities. Once agents begin interacting, they can create additional risk considerations—outcomes that no individual agent was designed or programmed to produce.20

- Emergent misbehavior refers to unpredictable or unintended outcomes that can arise when multiple agents interact.21 For instance, in trading environments, interactions among different agents could lead to algorithmic collusion.22 In other cases, without any single agent being explicitly instructed to “evade controls,” a multi-agent system could effectively learn approval-threshold arbitrage—a coordinated, unintended pathway that increases compliance, audit, and fraud risk while still appearing to be locally “policy-compliant” at each step.

- Coordination failures can occur if agents misunderstand task boundaries, sequencing, or responsibilities, resulting in duplication, omission, or contradictory actions. This could also happen due to semantic misalignment, as when agents interpret the same business rule or policy differently. For instance, one agent might assume that another completed a step that never actually happened. These failures also include harmful coordination, such as unintended collusion or the gaming of optimization targets.

- Feedback failures arise when agents form unregulated closed-loop interactions, leading to infinite task recursion, circular references, or deadlocks. In banking, this could lead to various risks, including operational, credit, and market risks, and possibly even systemic risks. For example, a reconciliation agent and a settlement agent may continuously re-trigger each other in a loop, overloading downstream queues and delaying trade settlements across desks.

- Cascading errors could occur if one agent’s hallucination, false assumption, or misclassification becomes an input for other agents, amplifying the initial error across workflows. A know-your-customer (KYC) agent that learns a false rule may influence anti-money laundering or other compliance agents, leading to systemic decision failures.
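Feedback failures of the kind listed above, such as two agents that keep re-triggering each other, can sometimes be caught before deployment by checking the declared trigger relationships for cycles. The sketch below assumes a hypothetical `triggers` mapping from each agent name to the agents it can invoke, and uses a standard depth-first search. It only sees declared triggers, so runtime safeguards such as hop counters would still be needed.

```python
from typing import Dict, List, Optional


def find_trigger_cycle(triggers: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle of agent names if the trigger graph has one, else None."""
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state: Dict[str, int] = {}
    path: List[str] = []

    def visit(agent: str) -> Optional[List[str]]:
        state[agent] = IN_PROGRESS
        path.append(agent)
        for nxt in triggers.get(agent, []):
            status = state.get(nxt, UNSEEN)
            if status == IN_PROGRESS:
                # nxt is already on the current path, so the path closes a loop.
                return path[path.index(nxt):] + [nxt]
            if status == UNSEEN:
                cycle = visit(nxt)
                if cycle:
                    return cycle
        path.pop()
        state[agent] = DONE
        return None

    for agent in triggers:
        if state.get(agent, UNSEEN) == UNSEEN:
            cycle = visit(agent)
            if cycle:
                return cycle
    return None


# Hypothetical wiring that mirrors the reconciliation/settlement example.
looping = {"reconciliation": ["settlement"], "settlement": ["reconciliation"]}
print(find_trigger_cycle(looping))
```

A check like this could run whenever an agent is added or rewired, with any reported cycle requiring an explicit termination condition before approval.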

These agentic AI vulnerabilities can quickly translate into material banking risks if left unmanaged. For banks, these could include transaction and payment errors, data privacy breaches, and technical failures that can cascade into operational disruptions, regulatory violations, and customer harm, triggering knock-on effects like reputational damage and financial loss. Even small breakdowns from malfunctioning or rogue AI agents can magnify across systems, increasing systemic risk across business lines, counterparties, and the broader financial network.

Managing agentic AI risks: Four dimensions to guide governance

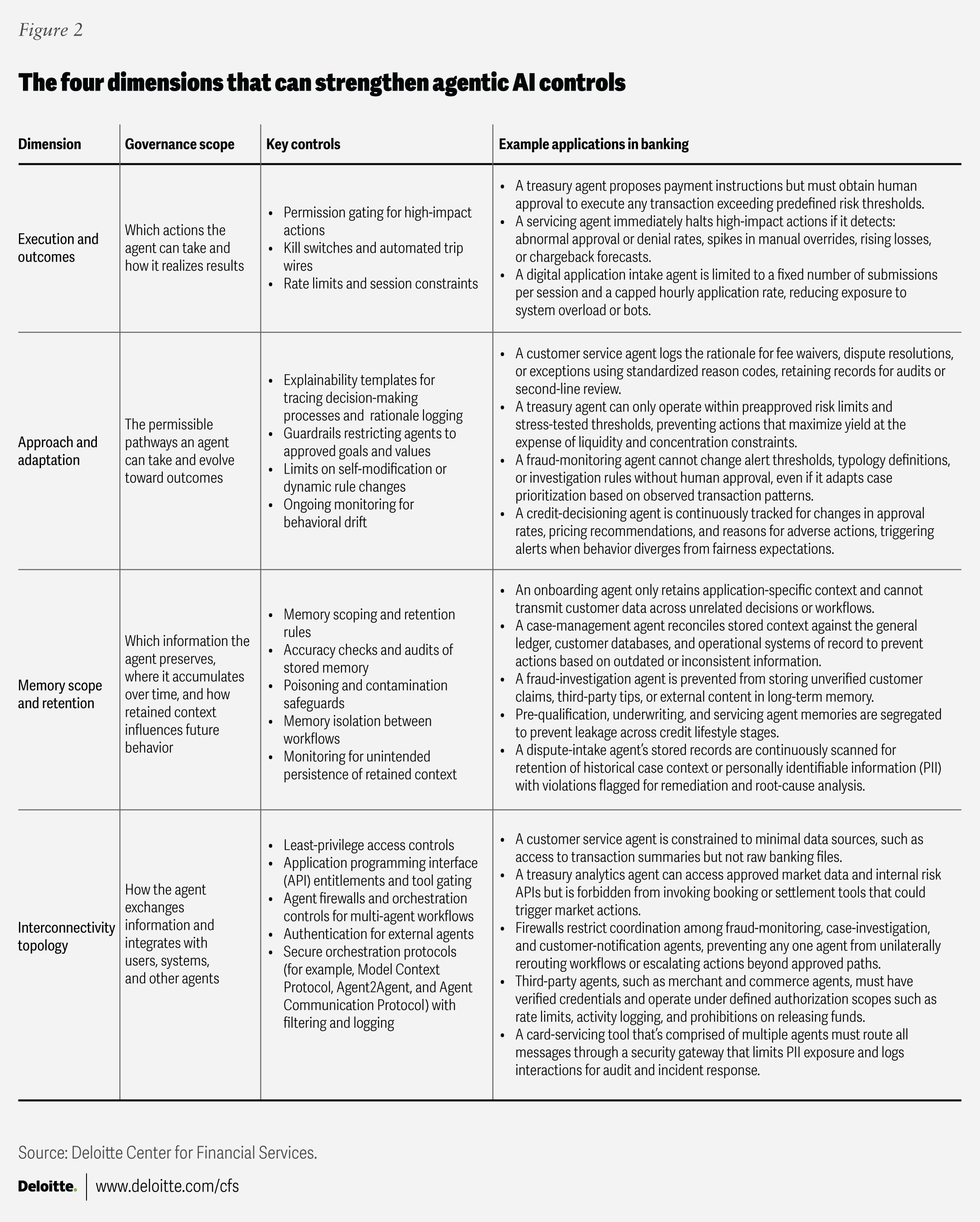

Outcome execution, decision-logic adaptation, memory scope and retention, and interconnectivity with tools, agents, and systems not only make agentic AI powerful but also provide a foundation for effective governance and oversight (figure 2).23 By designing levers aligned to each dimension and applying more scrutiny to higher-risk agents, banks can significantly reduce vulnerabilities.

Six risk mitigation strategies for banks to consider

As agentic AI moves from experimentation to deployment, banks should consider adapting existing risk frameworks to govern systems that act, decide, and learn with far more independence than today’s models. Agentic AI changes the risk calculus: decisions are faster, actions are autonomous, and failure modes are more complex. Instead of starting from scratch, banks should extend their current AI governance to handle these new dynamics. The following considerations highlight where to begin.

1. Extend existing AI risk frameworks to cover agent-specific capabilities

Banks should expand their AI risk management frameworks to assess agents, not just models. Agentic AI introduces end-to-end autonomous processes, so risk taxonomies should add new categories like tool misuse, action validity, and outcome monitoring. This should also include agentic AI categorization to distinguish among types of agentic solutions. Once agents are classified, banks can adapt established trustworthy AI frameworks by maintaining agent registries with metadata, risk scores, and tier-aligned controls that determine deployment approvals, permissible actions, and the requisite scope of monitoring.
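An agent registry with risk scores and tier-aligned controls could take many forms. The sketch below is one illustrative shape, not a prescribed design: the class names, the 0-to-1 risk scale, the tier thresholds, and the control labels are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Illustrative tier-aligned controls; real values are a policy decision.
TIER_CONTROLS = {
    RiskTier.LOW: {"approval": "agent_owner", "monitoring": "sampled"},
    RiskTier.MEDIUM: {"approval": "model_risk", "monitoring": "daily_review"},
    RiskTier.HIGH: {"approval": "risk_committee", "monitoring": "real_time"},
}


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str
    risk_score: float  # assumed scale: 0.0 (lowest) to 1.0 (highest)
    allowed_actions: frozenset

    @property
    def tier(self) -> RiskTier:
        # Thresholds are placeholders chosen for the example.
        if self.risk_score >= 0.7:
            return RiskTier.HIGH
        if self.risk_score >= 0.4:
            return RiskTier.MEDIUM
        return RiskTier.LOW


class AgentRegistry:
    """Hypothetical registry keyed by agent ID."""

    def __init__(self):
        self._records = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._records:
            raise ValueError(f"agent {record.agent_id} is already registered")
        self._records[record.agent_id] = record

    def is_permitted(self, agent_id: str, action: str) -> bool:
        """Unregistered agents and unlisted actions are denied by default."""
        record = self._records.get(agent_id)
        return record is not None and action in record.allowed_actions

    def controls_for(self, agent_id: str) -> dict:
        return TIER_CONTROLS[self._records[agent_id].tier]
```

Denying anything that is not registered keeps the registry as the single place where deployment approval and permissible actions are decided.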

2. Design for agent disclosure by default

Banks should also try to anticipate the evolving regulatory expectations in the AI space and proactively design agentic systems accordingly. Across jurisdictions,24 rules may increasingly emphasize origin requirements for AI-generated outputs, as well as disclosure when customers are interacting with automated agents rather than humans, among others.25 Banks may need to add user-facing notices in digital channels, maintain audit-ready records that an agent was used in a decision or communication, or watermark AI-generated content. In essence, agent controls will need a “regulatory adapter layer” so that disclosures and content provenance can be activated as per region, product, or channel dynamics.
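The “regulatory adapter layer” idea can be pictured as a lookup that resolves disclosure settings per region and channel, falling back to the strictest settings when nothing specific is configured. This is a rough sketch under stated assumptions: `DisclosurePolicy`, `resolve_policy`, and `apply_disclosure` are hypothetical names, and the region keys are placeholders that do not describe the rules of any real jurisdiction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisclosurePolicy:
    show_agent_notice: bool   # tell the customer an automated agent is involved
    record_agent_use: bool    # keep an audit-ready record of agent involvement
    watermark_content: bool   # mark AI-generated content


# Strictest settings apply when no specific rule is configured.
DEFAULT_POLICY = DisclosurePolicy(
    show_agent_notice=True, record_agent_use=True, watermark_content=True
)

# Placeholder entries; they do not reflect any actual regulation.
POLICIES = {
    ("REGION_A", "chat"): DisclosurePolicy(True, True, False),
    ("REGION_B", "email"): DisclosurePolicy(False, True, True),
}


def resolve_policy(region: str, channel: str) -> DisclosurePolicy:
    """Return the configured policy, or the strict default if none exists."""
    return POLICIES.get((region, channel), DEFAULT_POLICY)


def apply_disclosure(message: str, region: str, channel: str) -> str:
    """Prefix a user-facing notice when the resolved policy requires one."""
    policy = resolve_policy(region, channel)
    if policy.show_agent_notice:
        return "[Automated agent] " + message
    return message
```

Keeping the rules in data rather than in agent logic means a change in one region or channel can be switched on without redeploying the agents themselves.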

3. Build transparent, traceable agent identity and logging

Agents should be treated as accountable actors, similar to human employees. Banks should know exactly which agent took an action, what tools it invoked, and why. Unique agent IDs, output tagging, immutable tool-use logs, and real-time monitoring should be implemented to ensure that every decision leaves a clear audit trail.
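One way to make a tool-use log tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates everything after it. The sketch below uses Python’s standard `hashlib` and `json` modules; the `ToolUseLog` class and its field names are hypothetical. It detects tampering but does not prevent it, and timestamps are omitted to keep the example short. True immutability would also depend on where the log is stored.

```python
import hashlib
import json


class ToolUseLog:
    """Hypothetical append-only log where each entry commits to the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, agent_id: str, tool: str, reason: str) -> dict:
        """Record which agent invoked which tool and why."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {
            "agent_id": agent_id,
            "tool": tool,
            "reason": reason,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=self._digest(body))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

An auditor could call `verify()` periodically and compare the latest hash against a copy held outside the agent platform.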

4. Integrate agent-specific cybersecurity protocols

Cybersecurity should evolve to reflect AI agents’ behavior. Banks should implement controls that enforce invocation limits on external tools, continuous permission checks, and detection of prompt injection or instruction tampering. A true “zero trust” approach for agents should restrict external calls, expire permissions, and flag unauthorized tool usage.
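The controls named above (invocation limits, expiring permissions, and flagging of unauthorized tool use) can be combined in a single gate that every tool call passes through. The following is a simplified sketch; `ToolGate`, `ToolGrant`, and the reason codes are hypothetical, and prompt-injection detection is out of scope here.

```python
import time
from dataclasses import dataclass


@dataclass
class ToolGrant:
    agent_id: str
    tool: str
    expires_at: float
    max_calls: int
    calls_made: int = 0


class ToolGate:
    """Hypothetical deny-by-default gate for agent tool calls."""

    def __init__(self, clock=time.time):
        self._clock = clock
        self._grants = {}
        self.flagged = []  # (agent_id, tool, reason) for every denied call

    def grant(self, agent_id: str, tool: str, ttl_seconds: float, max_calls: int) -> None:
        """Issue a short-lived permission with a cap on invocations."""
        expires_at = self._clock() + ttl_seconds
        self._grants[(agent_id, tool)] = ToolGrant(agent_id, tool, expires_at, max_calls)

    def authorize(self, agent_id: str, tool: str) -> bool:
        """Check the grant on every call rather than trusting an earlier check."""
        g = self._grants.get((agent_id, tool))
        if g is None:
            return self._deny(agent_id, tool, "no_grant")
        if self._clock() >= g.expires_at:
            return self._deny(agent_id, tool, "expired")
        if g.calls_made >= g.max_calls:
            return self._deny(agent_id, tool, "invocation_limit")
        g.calls_made += 1
        return True

    def _deny(self, agent_id: str, tool: str, reason: str) -> bool:
        self.flagged.append((agent_id, tool, reason))
        return False
```

Because each denial is recorded in `flagged`, the same gate that blocks a call also produces the signal a monitoring team or guardian agent would review.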

5. Create clear accountability for agentic AI governance

Ownership should span the entire agent life cycle—design, deployment, oversight, and updates. Banks should put in place defined roles such as agent owner, validator, and steward, supported by cross-functional governance across risk, compliance, and cybersecurity. Agents will also require ongoing supervision through activity logs, escalation triggers, and embedded fail-safes. Given the speed and scale of agentic execution, guardian agents26 could be introduced to support continuous oversight. These systems monitor agentic behavior in real time, flagging anomalies, policy violations, and ambiguous decisions for further review. They can also detect weaknesses, such as factual inaccuracies or exposure to sensitive data within agentic workflows, providing an added layer of security to supplement human roles.

6. Build talent and training for agentic risk oversight

Human oversight remains essential. As multi-agent systems shift more human responsibility toward supervising tasks, banks may have to integrate human-on-the-loop models27 and AI agent observability28 within their agentic operations. Agent supervisors must understand when to intervene, how to evaluate agent decisions, and how to override actions quickly. Banks should upskill teams on agentic behaviors, risk frameworks, alignment issues, and oversight tools. New roles, such as AI risk officers, behavior auditors, and simulation specialists, will become increasingly important. Training programs should prepare teams to manage autonomy, detect failure modes, and make informed escalation decisions.

Embracing the agentic AI future

As agentic AI transforms how banks operate day to day, technology continues to introduce new layers of complexity—and risk. The first wave of agentic AI is giving rise to intelligent virtual assistants. Soon enough, this is likely to evolve into teams of multi-agent systems that interact with other tools, vendors, and even customer-owned agents. In both cases, complexity will grow, testing today’s governance and oversight models. Banks should be ready for a future where agent ecosystems are as common as APIs.

Thankfully, banks don’t need an entirely new playbook to get started. Instead, they can extend existing risk frameworks to safeguard agentic systems now. Priorities should include shared standards for identity and auditability, safer orchestration patterns, and clear rules defining when agents can negotiate terms, commit to decisions, or escalate behavior. Taken together, these steps can help banks adopt agentic capabilities with confidence as they move toward an increasingly autonomous, AI-enabled economy.