Fact or fabrication? AI is blurring the line when it comes to people and work

In the age of generative AI, it’s getting more difficult to know what is true, relevant, or meaningful about people and work. How can we manage disinformation at scale?

Organizations are losing trust in data about workers and work at the very moment they depend on it most.1 For decades, data has been a fundamental substrate of modern organizations—an essential resource that powers decision-making, enables predictive insight, and sustains resilience. But data without trust has the potential to do more harm than good.

Generative artificial intelligence and agentic systems can now produce content at a staggering scale, potentially blurring authorship, amplifying bias, and creating echo chambers where people are only exposed to information that reinforces existing beliefs. The challenge of distinguishing what is real from what is fake is growing, and this problem will likely worsen over time.

For example, according to SEO firm Graphite, as of May 2025, more than half of new web articles were generated primarily by AI,2 up from just 5% before ChatGPT launched.3 Though Graphite reports that 86% of the top-ranking pages on Google are still human-written,4 this synthetic wave could contaminate data quality for everything from SEO to model training.

Many organizations are now questioning the legitimacy of data about both people and their performance at work. How reliable is the data we have on our workers’ skills and capabilities? Can we trust the resumes of job candidates?

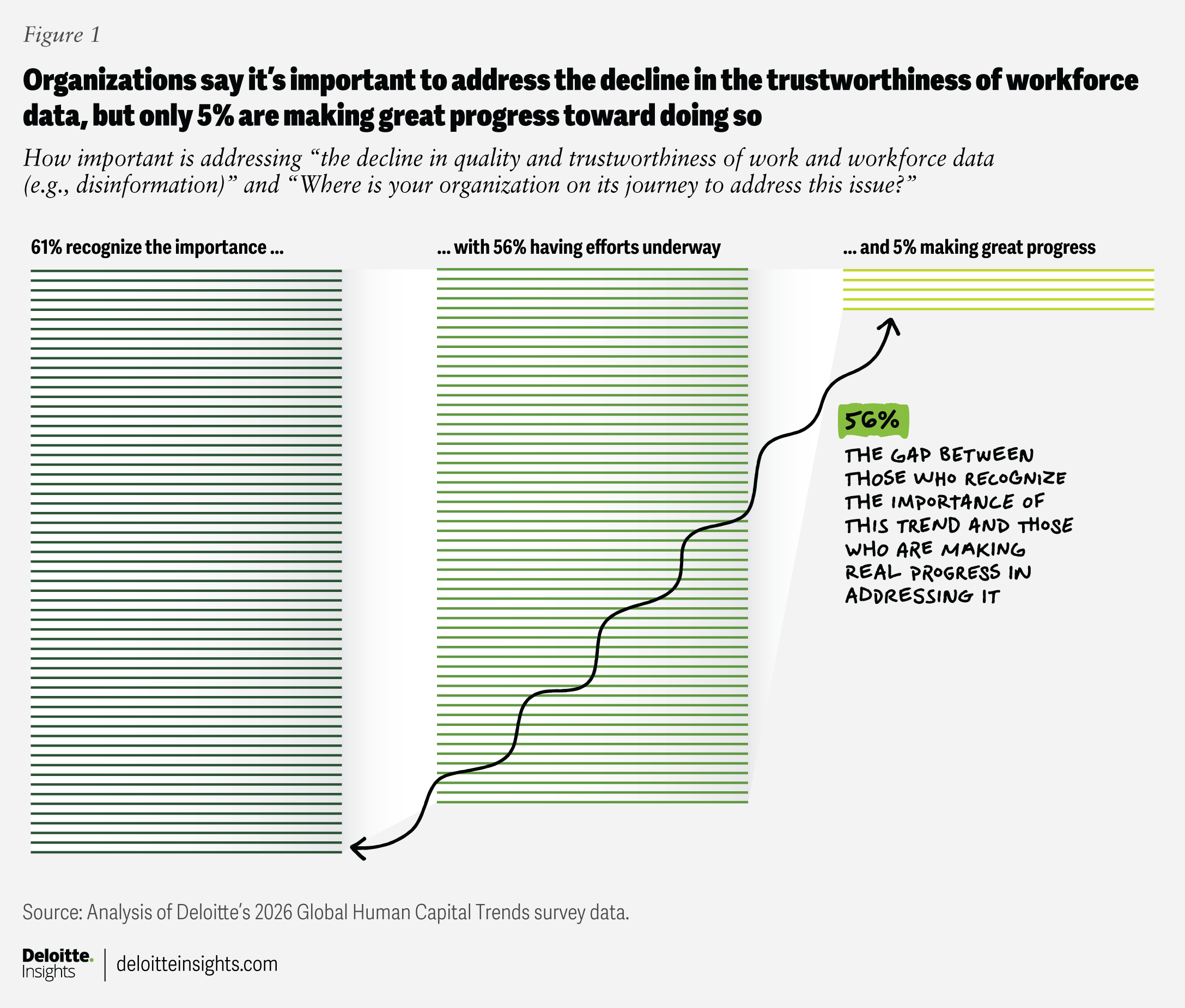

This is not a distant technological problem; it is a pressing business risk that could affect an organization’s brand, reputation, finances, and operational performance. Yet, according to Deloitte’s 2026 Global Human Capital Trends survey, only 5% of organizations are making great progress in addressing the decline in quality and trustworthiness of work and workforce data (figure 1). To address these challenges, organizations should consider expanding beyond cybersecurity to disinformation security and establishing a digital trust pact to protect worker-related data.

The coming AI storm

Organizations have long worked to create a clean, single source of truth for their people data. Despite progress, the rise of AI may introduce new complications.

The erosion of authenticity

Trust in data or information comes from knowing that it’s authentic—that it’s unaltered and originates from a verified source. But AI-generated content is challenging authenticity, often making it difficult to distinguish between genuine human talent and sophisticated fabrications.

This is a particular problem in talent acquisition. Data in talent markets is more abundant than ever, but identity and capability are often unclear. Our 2026 survey reveals that 95% of executives are concerned about the accuracy of the data gathered on candidates’ skills and capabilities—and with good reason: Just over a third of workers admit they regularly use AI to embellish their personal profiles.

AI-generated resumes can exaggerate a candidate’s job scope or skills, invent quantifiable results, or align content too perfectly with a job description, suggesting deeper expertise than the candidate actually has. Likewise, candidates can submit portfolios—designs, writing, or code—they didn’t personally create.

Authenticity is further strained by synthetic identities. AI can now fabricate entire candidates, complete with deepfaked interviews. One security firm, for example, interviewed an AI deepfake candidate and discovered the deception in part by asking them to perform a simple physical gesture—waving a hand in front of their face—that the bot could not execute, according to reporting in The Register.5 A projection by Gartner suggests that by 2028, one in four job seekers could be artificial,6 raising not just hiring concerns but risks of malicious infiltration.

Even when the candidate is a real person, interviews are becoming harder to trust. Employers report that AI-assisted responses mask applicants’ true capabilities, leading to disappointing performance on core human skills once hired.7 Some organizations, including Google, are considering bringing back in-person interviews to reassert authenticity.8

Meanwhile, automation has created a “bot-versus-bot” dynamic: candidates use AI to mass-generate applications and complete assessments,9 while employers use AI to screen them.10 The result is résumé noise and “hiring slop,” where indicators of genuine human experience are lost.11 The rise of ghost jobs—in 2024, 4 in 10 organizations posted jobs with no intention to hire, according to Resume Builder—only compounds the sense that talent markets are awash in inauthentic information.12

But the issue extends beyond talent acquisition in the workplace. Consider how AI-driven deception has already enabled multimillion-dollar frauds, with cybercriminals, for example, using deepfake technology to pose as a company’s chief financial officer in a video conference, ultimately convincing a worker to pay out $25 million.13

While organizations are increasingly aware of external misinformation risks, internal data quality issues are often neglected. Nearly half (48%) of executives in our 2026 survey worry that AI may introduce misinformation directly into company datasets, where small inaccuracies can cascade into major operational and ethical failures.

The erosion of agency

As authenticity crumbles, so too does agency—the clear, unambiguous link between an action and its author. It is becoming more difficult to determine what work is human-created and what is AI-generated, a problem fueled in part by a growing shadow economy of unregulated AI tools. Forty-one percent of workers say they have used AI to automate part of their job, often without their employer’s awareness.

The result is a parallel data ecosystem in which AI obscures or simulates human contributions at work. Not surprisingly, 80% of executives in our survey are concerned that workers are using AI to appear more productive than they are. If we don’t know who did what, how do we reward and value workers?

Agency is also eroding due to the evolution of the technology itself. AI is moving from a supportive tool to a co-author of work, blurring authorship. When AI-generated outputs become indistinguishable from purely human work, it can be challenging to accurately assess workers and their work. Should human and machine contributions be evaluated jointly? Should disclosure of who—or what—created key work products be required? Or does the distinction matter at all when the outcome is strong?

The erosion of critical judgment

Perhaps the most dangerous long-term threat is the erosion of cognitive capabilities. As workers increasingly rely on AI to perform tasks, there is growing concern that they may lose critical judgment14 and domain expertise,15 disempowering and deskilling themselves in the process. In our survey, 42% of executives say they are already concerned about employees becoming overly dependent on AI for essential cognitive tasks.

“People are treating AI as a technology that provides answers. Rather, we need to see AI as a thought partner who might not always have 100% accurate answers—if we view it as a knowledge partner, then a light switch goes off,” says Michael Ehret, senior vice president and chief people officer at Walmart.16

Two major risks emerge under these conditions:

- Workslop: Emerging research reported in The Wall Street Journal indicates that AI doesn’t always level performance—it amplifies it.17 Experienced workers can use AI to extend their expertise, while less-skilled workers are more likely to generate “workslop”: passable but shallow outputs that mask weak reasoning and slow their own development.18 Once this low-quality work enters organizational data, AI models begin learning from it, contaminating training sets in ways that later training can’t fully undo.19

- The AI echo chamber: AI tools increasingly mirror a user’s past inputs, tone, and preferences. Instead of broadening perspectives, AI may narrow them, reinforcing existing beliefs and organizational norms. For example, if a marketing professional often frames campaigns around one audience type, AI is likely to suggest similar strategies rather than varied or unconventional approaches. Likewise, if AI is trained on internal company data—such as reports, policies, emails, and prior projects—it inherits the culture, norms, and blind spots of that organization, reinforcing “the way we’ve always done things.” Over time, workers may receive fewer challenges to their thinking and more validation of what they already assume, leading to digital groupthink and a further decline in independent judgment.

How can leaders and workers address these challenges with work and worker data to help establish authenticity? There are two potential paths: shifting the organizational mindset toward disinformation security, and taking steps to help workers evaluate what’s real and what’s not.

Disinformation security: Creating a new digital trust pact

As authorship, agency, and judgment come into question, a shift from cybersecurity to disinformation security is essential to protect the authenticity of work and worker data. It is no longer enough for organizations to protect systems from external threats; they should also safeguard the integrity of worker-related data against manipulation and fabrication. This means creating a new digital trust pact with the following practices:

Implementing AI lineage mapping

AI is only as reliable as the data it is trained on, and unreliable data can create problems even when internal systems seem sound. One media company, for example, is actively cleaning and validating its data to support ethically trained AI systems for internal and client use. Explains the chief human resources officer: “The most important thing we can do to be effective with AI is to get the data right. We’ve been experimenting internally and working with outside experts to learn what they are doing. And the more we learn, the more we realize the potential but also the limitations. Without authentic, reliable data, we may not only be at risk, but we won’t realize AI’s potential value.”20

AI itself can support this work through automated lineage mapping: tracking the origin, transformation, and use of data across training and inference. Technologies such as blockchain can further strengthen lineage by creating immutable, time-stamped records of every data transaction, embedding trust directly into the architecture.
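To make the idea of immutable, time-stamped lineage records more concrete, here is a minimal sketch in Python. It is not a blockchain and not any vendor's product: it is a single-process hash chain, in which each record stores the hash of the record before it, so that altering an earlier entry becomes detectable. The names `LineageRecord`, `LineageLog`, and the example dataset and actor labels are hypothetical, chosen only for illustration.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List

# Placeholder "previous hash" for the first record in the chain (an assumption).
GENESIS = "0" * 64


@dataclass(frozen=True)
class LineageRecord:
    dataset: str      # which data asset the event concerns
    action: str       # e.g. "ingested", "cleaned", "used_for_training"
    actor: str        # system or team responsible for the event
    timestamp: str    # ISO 8601 string supplied by the caller
    prev_hash: str    # hash of the preceding record
    record_hash: str  # hash of this record's contents plus prev_hash


def _digest(dataset: str, action: str, actor: str,
            timestamp: str, prev_hash: str) -> str:
    """Hash the record fields in a stable (sorted-key) JSON form."""
    payload = json.dumps(
        {"dataset": dataset, "action": action, "actor": actor,
         "timestamp": timestamp, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class LineageLog:
    """Append-only log in which every record commits to the one before it."""

    def __init__(self) -> None:
        self.records: List[LineageRecord] = []

    def append(self, dataset: str, action: str, actor: str,
               timestamp: str) -> LineageRecord:
        prev_hash = self.records[-1].record_hash if self.records else GENESIS
        record = LineageRecord(
            dataset, action, actor, timestamp, prev_hash,
            _digest(dataset, action, actor, timestamp, prev_hash),
        )
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; return False if any record was altered."""
        prev = GENESIS
        for rec in self.records:
            expected = _digest(rec.dataset, rec.action, rec.actor,
                               rec.timestamp, rec.prev_hash)
            if rec.prev_hash != prev or rec.record_hash != expected:
                return False
            prev = rec.record_hash
        return True
```

In use, a team would call `append` each time worker data is ingested, transformed, or fed to a model, and run `verify` before relying on the log. A production system would also need distributed storage, access control, and signatures, which this sketch leaves out.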

A group of human resources vendors is working together to increase trustworthiness and interoperability in talent data using blockchain.21 Relatedly, blockchain-based skills-verification solutions aimed at verifying the credentials of job seekers and project team members are emerging. Organizations can verify a person’s work experience, skills acquired, job titles, and more, and workers can then decide which parts of their verified credentials they want to share with prospective employers and for how long.22 The public sector is getting on board too; SkillsFuture Singapore issues tamper-proof digital certifications to verify the authenticity of workforce skills and qualifications.23

Conducting AI risk simulations

Discover where your organization’s particular vulnerabilities lie by running stress tests or red-teaming exercises. These exercises can help identify potential failure points, including data poisoning, model bias, or misalignment with organizational objectives, before they cause significant damage.

While AI risk simulations are still an emerging application, many cyber companies are now creating virtual platforms that allow clients to simulate potential scenarios. For example, Identifi Global has created a platform with live biometric verification, audit trails, and fraud-checking mechanisms that allow human resources departments to simulate deepfake candidates and train their workers to spot them. One client was able to avoid a hiring security breach when the platform helped recruiters identify background inconsistencies during the final interview stage for a C-suite role. Manual follow-up confirmed a deepfake impersonation attempt, saving millions in potential losses.24

Real-time, dynamic identity authentication

Cybersecurity company Pindrop realized it needed to take action when it discovered that 1 in 6 job applications in its own organization was showing clear signs of fraud and that many candidates were using deepfake technology during live interviews. Candidates were bypassing every existing layer of defense, including resume scanning, keyword matching, reference checks, and authentication checks. To address the issue, the company developed tools that allow talent acquisition and security teams to authenticate candidates’ identities continuously, ensuring that the person on screen is the same individual throughout the process. It also developed technology to detect signs of synthetic media or third-party coaching, as well as a way to flag discrepancies across assessments, interviews, and onboarding steps.25 It is increasingly important to verify not only the identity of humans but also that of AI agents.

Judgment calls: Helping workers understand what’s real

While the technical tools are evolving quickly, it’s important to note that they are still probabilistic, not deterministic. Google chief executive Sundar Pichai says in an interview with the BBC, “We take pride in the amount of work we put in to give us as accurate information as possible, but the current state-of-the-art AI technology is prone to some errors.”26 Human judgment skills still play an important role in determining what’s real. Organizations can build these skills with the following practices:

Educating hiring managers and recruiters

Hiring managers may not be aware of the rise in fake job candidates. Ben Sesser, CEO of BrightHire, tells CNBC, “They’re responsible for talent strategy and other important things, but being on the front lines of security has historically not been one of them.”27 It is particularly critical to ensure that recruiting and talent acquisition processes account for AI-related risks, as well as synthetic or unverifiable candidate data.

Training for reflexivity and judgment

As AI becomes more embedded in work, organizations should build reflexivity—the ability to reflect on one’s own actions and thinking—as a core skill, encouraging individuals to question how AI influences their judgment and decision-making.

“As we increase AI adoption, we’re vigilant about maintaining human judgment, especially for complex or sensitive decisions,” says the vice president of global talent strategy and succession at one multinational consumer goods company. “A lot of our attention is on striking the right balance: We want to automate where possible, but we’re keenly aware of when human oversight is nonnegotiable.”28

Promoting transparency in work outputs

Organizations may also consider clearly disclosing when work products have been created or cocreated by AI to help others use judgment in evaluating them.

For example, Autodesk has created AI transparency cards. Modeled after nutrition labels on food packaging, each card clearly displays how AI was utilized in the creation of the content, including what the model did, what data was used, how the data is protected, and what safeguards were in place.29
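One way to picture such a card is as a small structured record attached to a work product. The sketch below is illustrative only and does not reflect Autodesk's actual format: the class name `TransparencyCard`, its field names, and the rendered layout are assumptions that simply mirror the four disclosures described above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TransparencyCard:
    """Hypothetical disclosure record for one AI-assisted work product."""
    title: str               # the work product the card describes
    model_role: str          # what the model did
    data_used: List[str]     # what data was used
    data_protection: str     # how the data is protected
    safeguards: List[str]    # what safeguards were in place

    def render(self) -> str:
        """Return a plain-text label, one disclosure per line."""
        lines = [
            f"AI transparency card: {self.title}",
            f"What the model did: {self.model_role}",
            "Data used: " + ", ".join(self.data_used),
            f"How data is protected: {self.data_protection}",
            "Safeguards: " + ", ".join(self.safeguards),
        ]
        return "\n".join(lines)
```

Keeping the card as structured data rather than free text means the same fields could be shown to readers and also stored alongside the work product for later audit.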

Other organizations, like one large pharmaceutical company, are experimenting with putting labels on everything from emails to slide decks, disclosing on a spectrum how much of the content was produced by humans versus AI.30

A path toward more trustworthy data

Distrust in work and workforce data will likely persist—even as organizations strengthen validation and authentication systems—because gen AI will continue to reshape how information is produced and perceived. The real challenge is not only technical but also foundational: preserving meaning, authorship, and accountability in an age of synthetic intelligence.

Organizations that fail to address these shifts risk eroding the foundations of human judgment and organizational culture. Overreliance on AI without critical oversight can lead to decisions that are efficient but not ethical, fast but not fair, measurable but not meaningful.

By contrast, where organizations pair AI with critical oversight, human-AI collaboration becomes accountable by design: technology accelerates insight, while humans remain the ultimate stewards of interpretation and judgment.

Methodology

For its 2026 Global Human Capital Trends report, Deloitte worked in collaboration with Oxford Economics to survey more than 9,000 business and human resources leaders across many industries and sectors in 89 countries. Deloitte supplemented this broad, global survey, which provides the foundational data for the report, with worker-, manager-, and executive-specific surveys to uncover where there may be gaps between leader and manager perception and worker realities. The survey data is complemented by more than 50 interviews with executives and subject matter experts from some of today’s leading organizations. These insights helped shape the trends in this report.