Business and IT leaders report AI agents are scaling faster than their guardrails

Only 21% of enterprises responding to a recent multicountry Deloitte survey report having mature governance in place to manage the risks of agentic AI

The race to build an artificial intelligence agent “workforce” is on, and winning might be less about moving first than about putting safety first.1 Skipping the design and enforcement of guardrails in favor of rapid adoption and experimentation might feel like a worthwhile shortcut, but retrofitting oversight later is likely a slower, costlier route, not to mention the potential risks to the brand and bottom line that organizations could encounter.2

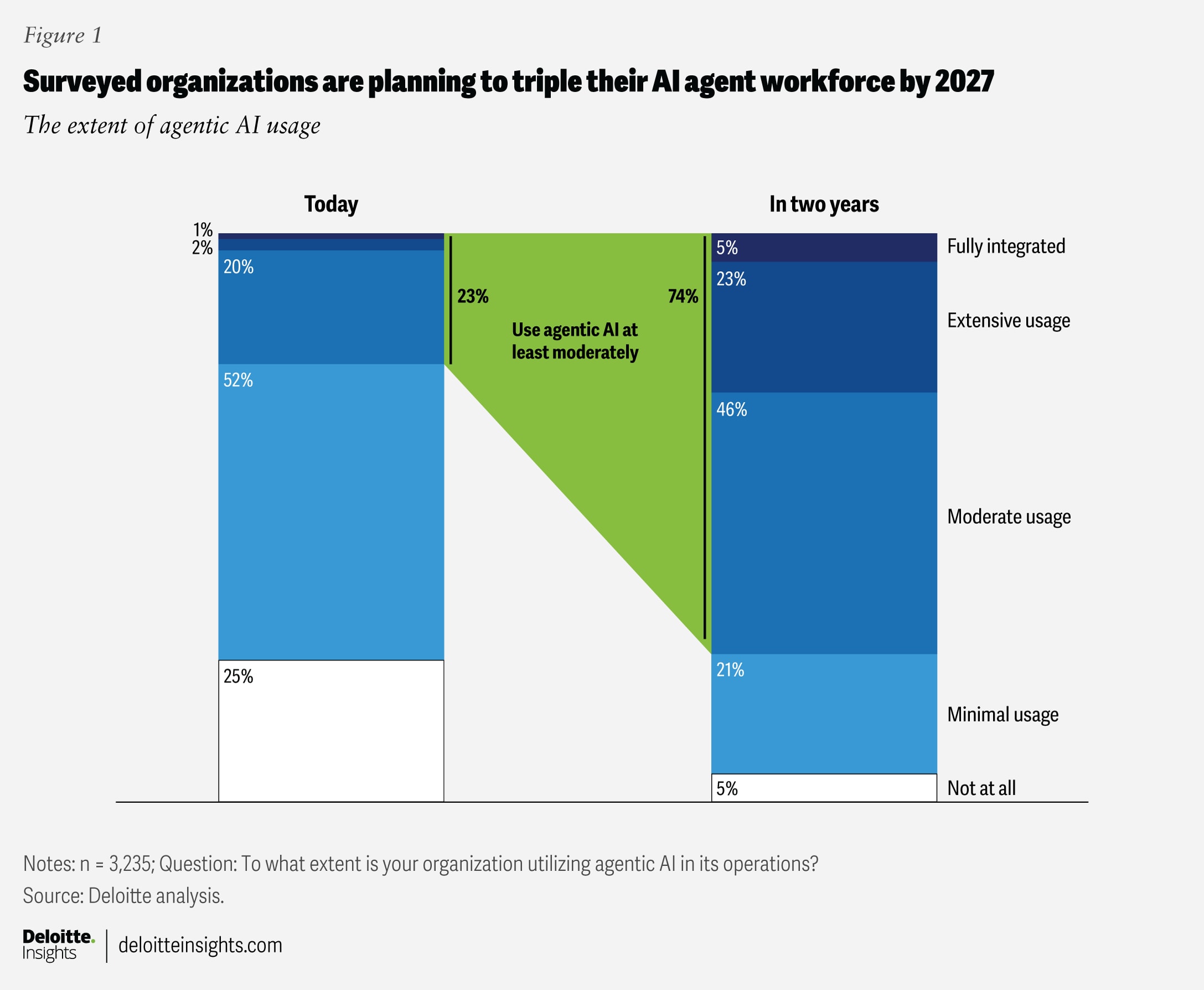

According to a recent Deloitte survey of 3,235 information technology and business leaders from 24 countries across the Americas, Asia Pacific, Europe, and the Middle East, all of whom have direct involvement in their organizations’ AI programs, only 21% of respondents say their organizations have a mature governance model in place for agentic AI. The survey findings, published in Deloitte’s 2026 State of AI in the Enterprise report, reveal that agentic AI usage is scaling quickly among respondent organizations. By 2027, 74% of respondents expect their companies to be using AI agents at least “moderately.” Of those respondents, 23% expect to use agents “extensively,” and 5% expect to fully integrate agents as a core component of their business operations (figure 1). Yet approximately 80% of the organizations surveyed currently lack mature governance capabilities for agentic AI. Such capabilities include clear boundaries that define which decisions agents can make independently and which require human approval, real-time monitoring systems that track agent behavior and flag anomalies, and audit trails that capture the full chain of agent actions to help ensure accountability and enable continuous improvement.3
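For readers who want a concrete picture of these capabilities, the following is a minimal illustrative sketch, not anything drawn from the survey or the report. It assumes a hypothetical policy of two action lists (`AUTONOMOUS_ACTIONS` and `APPROVAL_REQUIRED_ACTIONS`), a hypothetical `authorize` function, and a simple in-memory `AuditLog`; all names and action labels are invented for illustration. It shows one way a decision boundary, an escalation to human approval, and an audit trail might fit together.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: what an agent may do alone vs. only with human sign-off.
AUTONOMOUS_ACTIONS = {"draft_reply", "summarize_ticket"}
APPROVAL_REQUIRED_ACTIONS = {"issue_refund", "delete_record"}


@dataclass
class AuditLog:
    """In-memory audit trail; a real system would use durable storage."""
    entries: list = field(default_factory=list)

    def record(self, agent_id: str, action: str, decision: str) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "decision": decision,
        })


def authorize(agent_id: str, action: str, log: AuditLog,
              human_approved: bool = False) -> bool:
    """Return True if the action may proceed; every decision is logged."""
    if action in AUTONOMOUS_ACTIONS:
        decision = "allowed"
    elif action in APPROVAL_REQUIRED_ACTIONS:
        # Sensitive actions proceed only after a human has approved them.
        decision = "allowed_with_approval" if human_approved else "escalated"
    else:
        # Actions outside the policy are denied by default.
        decision = "blocked"
    log.record(agent_id, action, decision)
    return decision in ("allowed", "allowed_with_approval")


log = AuditLog()
authorize("agent-1", "draft_reply", log)    # proceeds autonomously
authorize("agent-1", "issue_refund", log)   # held for human approval
authorize("agent-1", "wire_funds", log)     # not in policy, so denied
```

In this sketch, entries marked "escalated" or "blocked" are the ones a monitoring process would most plausibly surface for review, and the deny-by-default branch reflects the assumption that an agent should not act outside an explicitly defined boundary.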

If not properly monitored and centrally controlled, AI agents can make unseen mistakes, work at cross purposes, reveal sensitive information, offend a customer, invite a cyberattack, and more. These risks could compound if governance challenges aren’t solved before enterprises scale pilots to full production.

According to the survey findings, companies that are succeeding with agentic AI are taking a measured approach, starting with lower-risk use cases, building governance capabilities, and scaling deliberately. This includes cross-functional governance structures that bring together IT, legal, compliance, and business unit leaders to set policies, monitor performance, and manage escalations.4

Rushing to deploy AI agents widely before establishing these governance foundations could expose organizations to significant and potentially costly risks, while also likely negating the competitive advantage that AI agents could otherwise provide.