Cognitive government accelerated: From aspiration to operational reality

AI is enabling governments to sense, predict, and orchestrate across systems for faster, smarter decisions and greater readiness

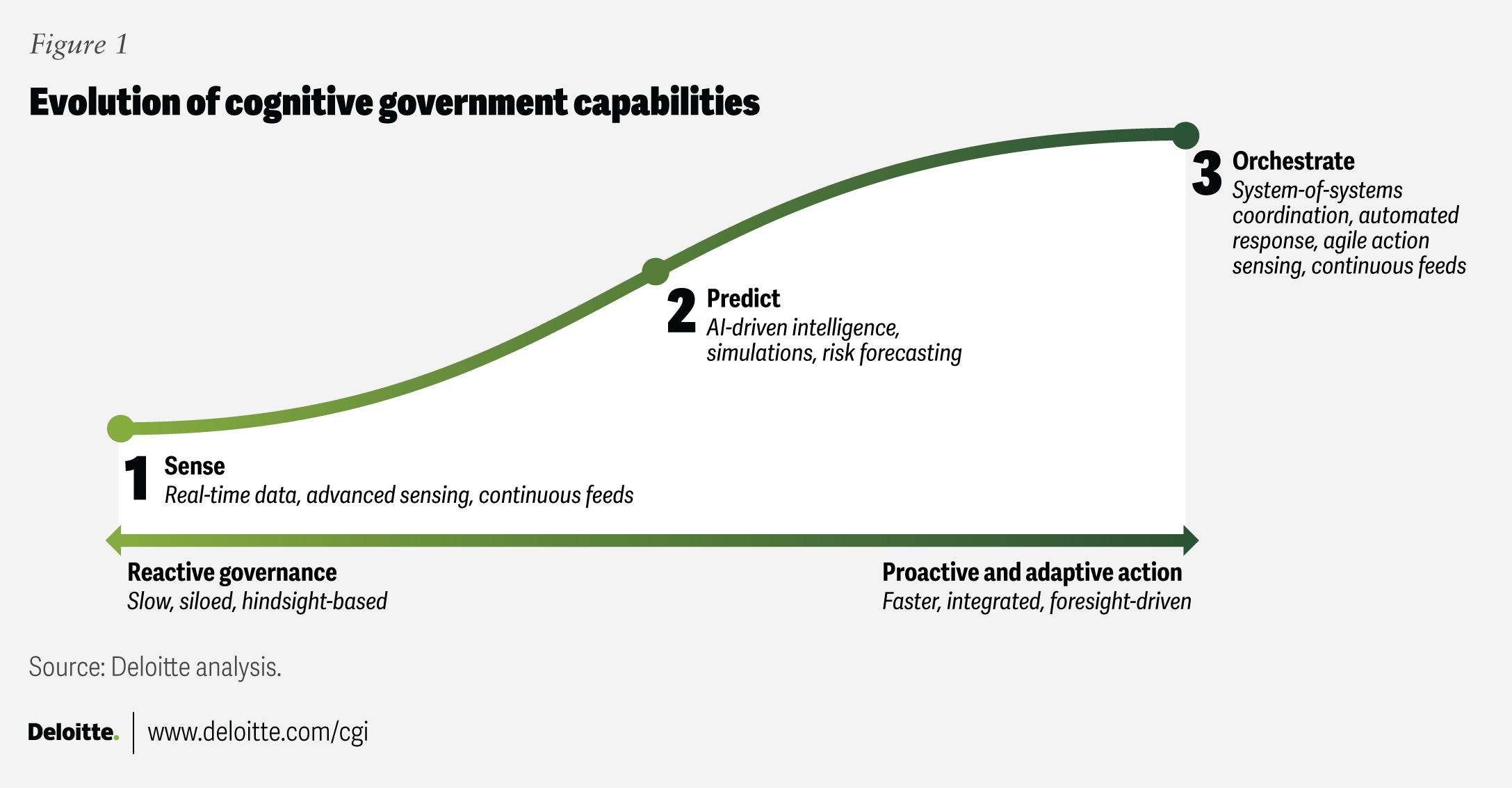

AI is enabling governments to sense emerging risks, predict outcomes before committing resources, and orchestrate complex systems in real time.

In an era of accelerating technological change, constrained budgets, and rising expectations, governments can no longer rely on reactive models of governance. Success increasingly depends on the ability to detect weak signals early, simulate choices before acting, and coordinate across systems with agility.

This marks a fundamental shift: from responding to events after they unfold to anticipating and shaping them.

Deloitte first introduced the idea of “government as a cognitive system” in 2021. Since then, sensing capabilities have matured rapidly.1 Real-time data streams, AI-powered analytics, and digital platforms are no longer experimental. What was once situational awareness is evolving into predictive insight and, increasingly, large-scale orchestration.

A new cognitive reality is within reach. With digital twins, advanced simulation, real-time sensing, and agentic workflows, governments can move from fragmented responses to coordinated, anticipatory action.

This trend explores how cognitive capabilities are accelerating across three dimensions: sensing, simulation, and orchestration (figure 1).

Signals: Cognitive government accelerated

Trend in action

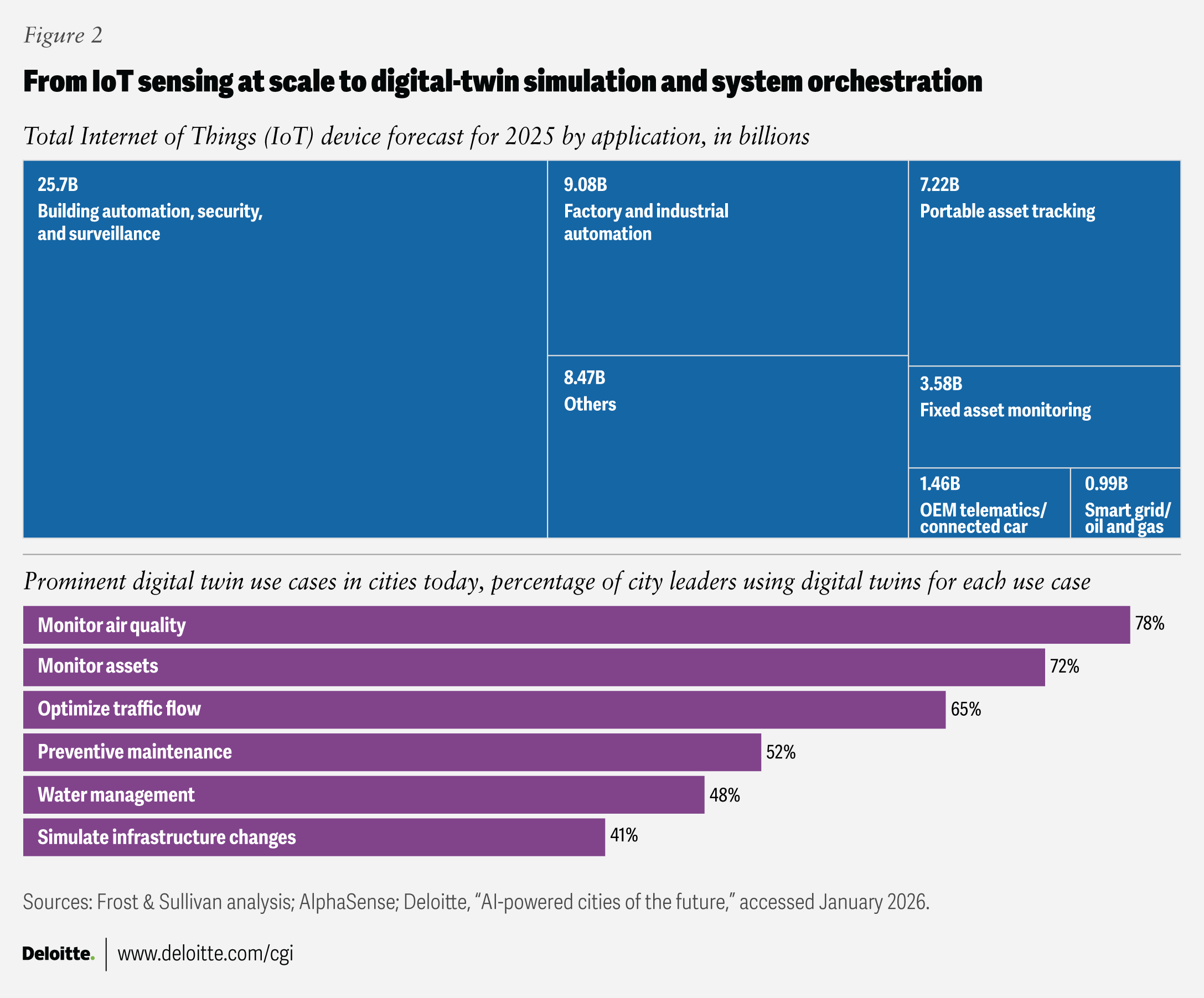

Advanced sensing optimizes lead times

Governments’ ability to sense emerging risks in real time has scaled dramatically in the past decade, largely driven by what futurist Peter Diamandis calls the “trillion-sensor economy,” a massive convergence of terrestrial, atmospheric, and space-based sensors.2 Networks of sensors across infrastructure, transport, utilities, and public safety now generate continuous data streams rather than periodic reports.

Artificial intelligence can further turbocharge government sensing by making sense of disparate, high-volume data sources—such as Internet of Things streams, satellite imagery, remote sensing, mobile telemetry, health wearables, administrative data, and social data—to detect anomalies sooner and forecast risk with greater precision.3 These accelerated capabilities can upend traditional sense-and-response models across disaster management, extreme weather warnings, health emergencies, and asset maintenance.4

The value of advanced sensing lies in lead time. Even seconds or minutes of early warning can dramatically improve outcomes.

In Japan, deep-learning models trained on thousands of tsunami simulations can now estimate wave height and coastline impact in seconds rather than minutes, accelerating evacuation decisions.5 In Sweden, pilot programs have used drones to deliver automated external defibrillators to suspected cardiac arrest cases. During trials, drones arrived an average of more than three minutes before ambulances—a critical margin in life-saving response.6

AI-enabled advanced sensing is also transforming infrastructure management, one of the top AI use cases identified in our survey of city leaders.7 The New York City Metropolitan Transportation Authority’s TrackInspect prototype uses smartphone sensors mounted in subway cars to capture vibration and sound data. AI models analyze the data in real time, flagging potential track defects for human inspection. In early pilots, the system detected the vast majority of known defects while significantly reducing manual inspection time.8
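To make the idea of flagging defects from continuous sensor data concrete, here is a minimal sketch of one simple approach: a trailing-window z-score check over a stream of vibration readings. It is an illustration only, not the method used by TrackInspect; the function name `flag_anomalies`, the window size, the threshold, and the sample readings are all assumptions.

```python
from statistics import mean, stdev

def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate sharply from recent history.

    Each reading is compared with the mean of the preceding `window`
    readings; it is flagged when the gap exceeds `threshold` standard
    deviations. All parameter values here are illustrative assumptions.
    """
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as worth a look.
            if readings[i] != mu:
                flagged.append(i)
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# Hypothetical vibration amplitudes; index 6 contains a spike.
vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 4.2, 1.0, 1.1, 0.9]
print(flag_anomalies(vibration))  # prints [6]
```

In a setup like this, flagged indices would be handed to an inspector for review rather than acted on automatically, which mirrors the human-in-the-loop pattern described above.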

Across domains, the shift is clear: from periodic inspection to continuous monitoring; from reacting to breakdowns to anticipating them. With stronger sensing capabilities, governments gain the time and confidence needed to move from response to prevention.

Situational awareness is the first step toward a deeper set of capabilities that enable government agencies not only to sense but also to predict and anticipate events with increasing accuracy.

Simulations improve execution decisions

Sensing provides early signals. Simulation allows leaders to test choices before acting.

Digital twins—virtual representations of physical systems—are moving from experimental tools to operational platforms. By combining real-time data with scenario modeling, governments can evaluate infrastructure investments, land-use changes, emergency responses, or service redesigns before committing public resources.9

In Singapore, Virtual Singapore integrates data on buildings, mobility, utilities, and infrastructure into a shared digital environment.10 Agencies can test how changes in one system—such as transport routing or drainage design—affect others, reducing surprises and improving coordination.11 For example, by feeding live telemetry from buses, drains, signals, and bins into the platform, crews and controllers can adjust routes, schedules, and timing in near real time.

Dubai is advancing a similar approach through its Dubai Live ecosystem, using simulation to assess the impact of proposed policies and infrastructure investments before implementation—shifting from reactive management to “proof first, policy next.”12

In the United States, the Broward Metropolitan Planning Organization’s Smart Metro platform combines housing, zoning, population, and transportation data into a unified geospatial system. Planners can run plain-language queries, model future scenarios, and visualize trade-offs across growth, mobility, and resilience.13 By combining data, analytics, and simulation, Smart Metro can help forecast traffic, predict land use trends, and model flood impacts on current and future infrastructure.

Helsinki offers a complementary model. Its Energy and Climate Atlas serves as a publicly accessible digital twin of the city’s building stock. Residents and businesses can explore retrofit options, estimate energy savings, and identify pathways to decarbonization. In this case, simulation is not just a planning tool for officials—it becomes a shared platform for public engagement and climate action.14

As these systems mature, they are becoming easier to use and more powerful. Generative AI interfaces now allow planners to query complex models in natural language. Emerging agentic workflows can automate routine coordination tasks while keeping humans in control of high-stakes decisions.

“Today we still think of digital twins as a mirror of the real world,” says Justin Anderson, managing director of data and digital at Connected Places Catapult. “I think we will see that shift from a twin being a representation of the world to a decision-making, autonomous piece of a broader digital nervous system.”15

The shift is subtle but profound: Digital twins are evolving from static representations into active decision-support systems that improve execution before policy meets reality.

Predictions for people, not just places

Not every challenge requires a city-scale digital twin. Many of government’s hardest problems involve predicting outcomes for people—not infrastructure.

Advances in data and AI are enabling agencies to move from reactive case management to earlier, targeted intervention. Rather than waiting for crises to emerge, governments can identify elevated risk sooner and allocate support more effectively.

Health care has long used triage methods to prioritize scarce resources. Today, those approaches are becoming more precise. Improved data, advanced analytics, and machine learning allow agencies to identify patterns across large populations while still relying on human judgment for final decisions.16

The US Department of Veterans Affairs’ Recovery Engagement and Coordination for Health program illustrates this shift. A suicide-risk algorithm analyzes electronic health records to identify veterans at elevated risk.17 Clinicians then review cases, reach out directly, and codevelop safety plans. The model does not replace professional judgment—it directs attention where it may matter most. Evaluations show increased outpatient engagement and a measurable reduction in documented suicide attempts.18

Beyond identifying risk at the individual level, governments are beginning to model how entire populations may respond to policy change. Agent-based models have long helped simulate how individuals or households react to new benefits, taxes, or mobility rules by modeling interactions among many small “agents.”19 These models are powerful but often limited in scale and typically used to analyze past behavior.
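For readers unfamiliar with agent-based modeling, the sketch below shows the basic mechanic in a toy setting: many simple agents, each with its own propensity, decide whether to enroll in a hypothetical new benefit, and their choices are influenced by how many others have already enrolled. The function `simulate_uptake` and every parameter in it (`awareness`, `peer_weight`, the propensity distribution) are assumptions made for illustration and are not calibrated to any real program.

```python
import random

def simulate_uptake(n_agents=200, steps=10, awareness=0.05,
                    peer_weight=0.5, seed=0):
    """Simulate cumulative enrollment share over time for a toy population.

    Returns a list with the enrolled share after each step.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    # Each agent gets a private propensity to enroll, drawn from [0, 1).
    propensity = [rng.random() for _ in range(n_agents)]
    enrolled = [False] * n_agents
    history = []
    for _ in range(steps):
        # Peer effect uses the share enrolled at the start of the step.
        share = sum(enrolled) / n_agents
        for i in range(n_agents):
            if enrolled[i]:
                continue  # enrollment is permanent in this toy model
            # Chance to enroll rises with outreach and with peer uptake.
            p = propensity[i] * (awareness + peer_weight * share)
            if rng.random() < p:
                enrolled[i] = True
        history.append(sum(enrolled) / n_agents)
    return history

# Compare a baseline with a stronger (hypothetical) outreach campaign.
baseline = simulate_uptake(awareness=0.05)
campaign = simulate_uptake(awareness=0.15)
print(baseline[-1], campaign[-1])
```

Even a toy model like this lets an analyst vary one assumption at a time and watch how aggregate uptake responds, which is the core appeal of the approach. Its limits are also visible: results depend heavily on the assumed behavioral rules.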

The next leap is toward large population models. Powered by modern AI and greater computational capacity, large population models simulate entire populations at much larger scale and with richer data. Rather than looking backward, they are designed to anticipate how policies or technologies may reshape systems before those changes fully unfold.20

The Massachusetts Institute of Technology and Oak Ridge National Laboratory’s iceberg index, for example, simulates interactions among millions of US workers to explore how AI adoption may reshape tasks, skills, and labor markets across counties and states.21 Rather than analyzing the past, it allows policymakers to test potential futures—informing long-term investment in training and workforce strategy before shifts fully materialize.22

The common thread is anticipatory governance. Whether identifying individuals at risk or modeling labor-market transitions, predictive tools allow governments to act earlier, target support more precisely, and design policies with clearer insight into downstream effects.

As these capabilities mature, the focus will remain on pairing algorithmic insight with human accountability—ensuring that predictions inform decisions without replacing judgment.

Enablers and accelerators

Turning cognitive ambition into operational reality involves a few deliberate shifts.

- Make simulation routine, not exceptional. Require scenario modeling for major policy, capital, and operational decisions. Leaders should see tested alternatives before committing public resources.

- Strengthen the data backbone. Reliable sensing and prediction depend on high-quality, interoperable data. Invest in shared data standards, governance, and cross-agency integration so signals flow continuously and securely.

- Institutionalize experimentation. Stand up ongoing pilots in predictive maintenance, algorithmic triage, and policy simulation. Treat cognitive capability as something that is practiced and refined, not deployed once.

- Clarify human–machine roles. Define which decisions can be automated, which require human review, and which must remain fully human. Codify risk-based decision rights to maintain accountability and public trust.
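One way to picture codified, risk-based decision rights is as an explicit routing rule that sorts each decision into one of the three tiers named above. The sketch below is a hypothetical illustration: the category names, the `review_threshold` value, and the function `assign_decision_right` are assumptions, and a real policy would be set through governance processes rather than a code default.

```python
from enum import Enum

class DecisionRight(Enum):
    AUTOMATED = "automated"        # system may act without review
    HUMAN_REVIEW = "human_review"  # system proposes, a person approves
    HUMAN_ONLY = "human_only"      # a person decides; system only informs

# Hypothetical categories that always remain fully human.
HUMAN_ONLY_CATEGORIES = {"benefit_denial", "enforcement_action"}

def assign_decision_right(category, risk_score, review_threshold=0.3):
    """Map a decision to a tier based on its category and risk score.

    `risk_score` is assumed to be a value in [0, 1] produced upstream.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if category in HUMAN_ONLY_CATEGORIES:
        return DecisionRight.HUMAN_ONLY
    if risk_score >= review_threshold:
        return DecisionRight.HUMAN_REVIEW
    return DecisionRight.AUTOMATED

print(assign_decision_right("appointment_scheduling", 0.1).value)  # automated
print(assign_decision_right("appointment_scheduling", 0.6).value)  # human_review
print(assign_decision_right("benefit_denial", 0.1).value)          # human_only
```

Writing the rule down in one place makes it auditable: anyone can see which decisions the system is allowed to make alone and where a person must be involved.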

Toward 2030: The future this trend could unlock

Cognitive capabilities are embedded into the daily fabric of government operations. Intelligence is no longer confined to specialized analytics teams—it becomes broadly accessible, continuously updated, and directly connected to action.

- Intelligence that is democratized. Advanced analytics platforms allow policymakers, frontline staff, and even residents to explore scenarios through natural-language interfaces. Simulation and forecasting tools become easier to use, strengthening transparency and shared accountability.

- Cities as coordinated systems. Urban digital twins evolve into integrated orchestration platforms. Transportation, energy, water, housing, and emergency systems operate as interconnected networks rather than isolated departments. Human operators supervise coordinated systems instead of manually stitching together fragmented data feeds.

- Cognitive operations centers. Governments establish cognitive operations hubs that bring together sensing, simulation, forecasting, and coordination. Foresight becomes continuous rather than episodic, enabling leaders to anticipate and adjust in weeks rather than years.

- Agentic workflows support constrained workforces. AI agents automate routine coordination tasks—intake, scheduling, triage, follow-up—under human supervision. This expands capacity in resource-constrained environments, allowing public servants to focus on judgment, complex problem-solving, and citizen interaction.

- Decision design as a core capability. Governments cultivate internal expertise in defining problems clearly, modeling trade-offs, and translating insight into action. Strategic foresight becomes an always-on discipline, informed by weak-signal detection and continuous analysis.

In this future, the measure of success should not be smarter dashboards or more sophisticated models. Instead, it should be faster response times, better-prepared systems, and stronger public trust, enabling cognitive government to move from aspiration to operating reality.

My take

Moving from writing plans to continuous strategizing

Professor Bert George, professor of public and nonprofit strategy, Department of Public and International Affairs, City University of Hong Kong

As governments adopt the emerging cognitive toolkit, there should be a fundamental shift in how public organizations operate.23 For too long, strategy was reduced to “writing plans,” a static exercise of producing strategic documents every few years.

Today, technology empowers public sector executives to move beyond simply creating documents, transforming “strategizing” into an ongoing, dynamic practice that takes into account purpose, analysis, place, and implementation (PAPI for short).24 Governments should develop the capability to strategize in real time, allowing them to nimbly adapt to challenges as they arise rather than waiting for the next planning cycle.

Technology is also transforming strategizing practices such as strategic foresight. Governments must move away from relying solely on internal data and harness “collective intelligence,” not just government intelligence. True resilience requires a whole-of-society effort. By using technology to analyze broad societal data sources, governments can turn foresight into a collaborative capability that reflects the reality of governance, not just the interests of government.

At the same time, the barrier to entry for technology is lowering. The user experience of modern AI interfaces, especially large language models, now allows policymakers, public managers, and other professionals to interact directly with data. Instead of wading through complex, inaccessible technical reports, executives can now ask specific questions and receive immediate, useful insights. This democratization of data is essential. It ensures that technology supports strategizing without adding administrative burden or unnecessary red tape. Ultimately, this shift facilitates a government that is truly strategic.

My take

Bridging the gap: From data sensing to responsive action

Stephen Goldsmith, Derek Bok professor of urban policy and director of Data-Smart City Solutions, Harvard Kennedy School

Many of us joined in praising and emulating famous legacy data-driven management programs such as ComStat and CitiStat, which produced breakthroughs in operational performance. Yet they functioned primarily as top-down systems in which leaders held managers accountable based on retrospective metrics. Too often, a disconnect emerged between what was measured and actual changes in city operations. We now have a chance to close that critical gap by moving from merely sensing problems via dashboards to actively improving outcomes on the ground.

Generative AI enables broader data use across the enterprise, extending insights beyond policy analysts in backrooms. By allowing natural language queries, specific issues—such as drainage outliers or pothole clusters—can be identified and resolved in real time. The objective is to harness the power of data by granting discretion to frontline workers, who are currently rule-driven, thereby transforming them into proactive problem-solvers capable of rapidly iterating on solutions.

Opportunities abound across a wide range of digital innovations. In infrastructure, for example, the value of merging digital and physical is immense. However, solutions require rethinking infrastructure economics, particularly with respect to life-cycle costing. A significant barrier to progress is that current procurement models often favor the lowest initial construction bid, thereby overlooking the long-term value of digital components, such as IoT sensors, that can predict failures in lighting or structural components. If digital infrastructure were viewed as a critical component of physical infrastructure, and procurement were reimagined to include digital components, it would enable “next-generation maintenance,” in which sensors drive efficiency rather than relying on reactive repairs.

Ultimately, the future of “cognitive government” will not be defined by the adoption of smarter tools or complex digital twins, but by tangible increases in responsiveness and public trust. The metric of success will be a reduction in time-to-response for citizen issues, or the use of data insights to preempt the problem altogether.