Making AI training work in government: A framework for tailored learning

Maximizing gains from AI calls for an AI-fluent workforce, which starts with government leaders matching training to employees’ roles, skills, and contexts

A data analyst, a procurement officer, and an information technology specialist walk into the same artificial intelligence training session. The analyst leaves eager to experiment, the procurement officer leaves unsure how it applies to her job, and the IT specialist leaves underwhelmed. The problem isn’t the people—it’s the premise. In government, not all workers need the same kind of AI fluency. Yet, most training programs still treat them as if they do.

This misalignment matters. Agencies are chasing the productivity gains AI can bring, but the path to those gains starts with people, not technology. When employees understand, trust, and meaningfully apply AI in their daily work, its impact compounds. According to Deloitte research, workers who are confident using AI use it more than 2.6 times as much each day, and their weekly time savings nearly double.1

Across the public sector workforce, however, confidence is still rare. In one survey, 62% of US state and local government employees reported that they had never received AI training.2 And a 2025 Pew survey of US adults found that only 12.2% had taken a class or received AI training in the past year.3

And even where training exists, it often falls short. In a Skillsoft survey of 2,500 full-time employees, 43% named AI as their biggest skills gap, and 62% rated their organization’s AI training as average or poor.4

The problem, then, isn’t only a lack of AI training. Where training does exist, there may be so many options that workers are unsure which one fits their role. AI training that isn’t tailored to these realities is unlikely to build lasting AI fluency, and without fluency, AI’s promise of transformation will remain out of reach.

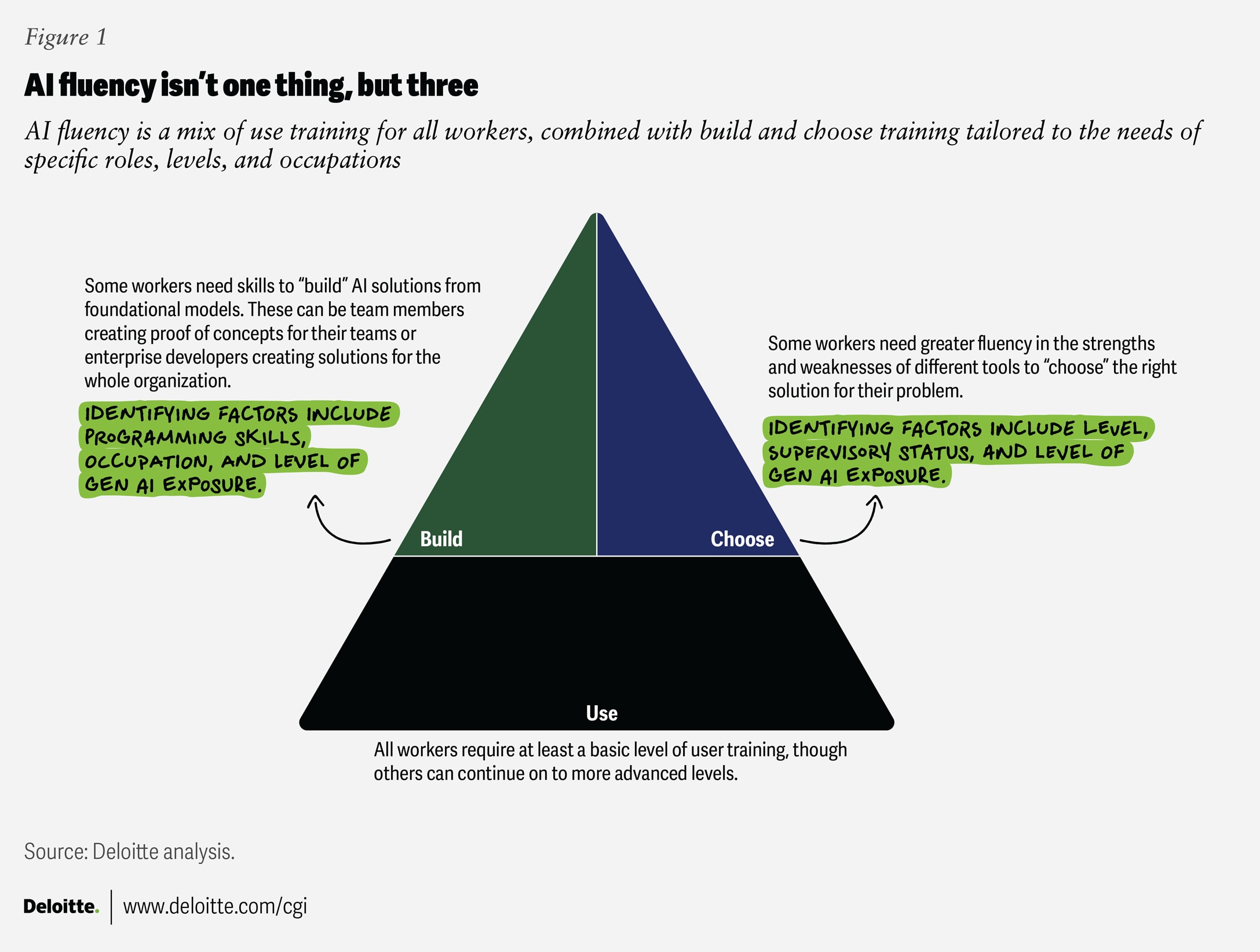

To help government agencies address this gap, the Deloitte Center for Government Insights developed an AI fluency framework: a way to tailor AI training to real workforce needs. It distinguishes between using, choosing, and building AI tools so that the right intensity of training reaches the right role and career level. With tailored training, employees are likely to use AI more often, allowing organizations to get more from their AI investments.

A framework for tailored AI fluency

Types of AI fluency

AI fluency isn’t one skill. Like first aid, it has different levels, depending on whether someone is applying a bandage to themselves, helping another person, or working as a paramedic. In AI’s context, there are at least three main types of fluency (figure 1):

- Use fluency is about empowering employees to apply AI in their daily tasks. This kind of AI fluency is foundational across the workforce. It helps many employees overcome barriers such as uncertainty, lack of trust, and unclear guidance about when and how AI should be used.

- Choose fluency involves understanding the broader landscape of AI capabilities, trade-offs, and risks. It is especially relevant for those in supervisory or managerial roles who must “choose” the right mix of tools that align with their team’s work and goals.

- Build fluency represents the deepest level of expertise. This is for specialists and technologists who can design, develop, and implement custom AI solutions to tackle unique team or organizational challenges.

Intensity of AI fluency

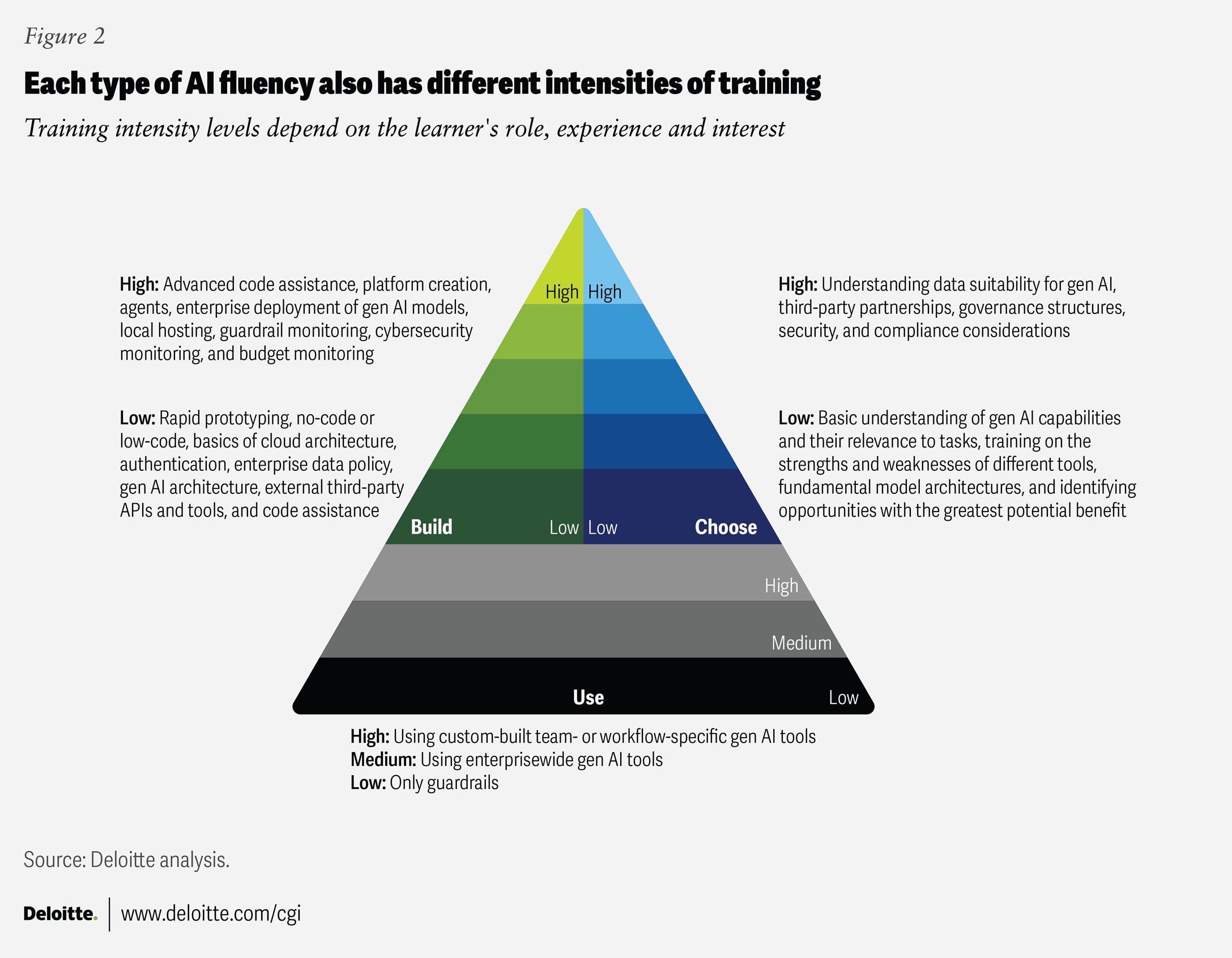

Types of AI fluency alone, however, are not sufficient. Each type comes in different intensity levels, depending on the learner’s role, experience, and interest (figure 2). Nearly everyone needs at least a basic level of AI “use” training, for example, but its intensity and format should differ. Highway maintenance workers, who may not interact with generative AI daily, likely need less intensive “use” training than marketing experts, who may use it several times a day.

A similar principle applies to “choose” and “build” AI fluency. A business-line executive may need enough “choose” fluency to pick the right AI tools for their specific needs, while an IT executive may need higher-intensity “choose” training to evaluate the right architecture for the enterprise. On the build side, professional IT developers may need advanced technical depth, while “mission builders” (non-IT workers who use AI to build technology solutions) embedded within work teams may need lower-intensity, more hands-on training to create simple AI tools for their team’s daily work.

How to identify the right training for your workforce

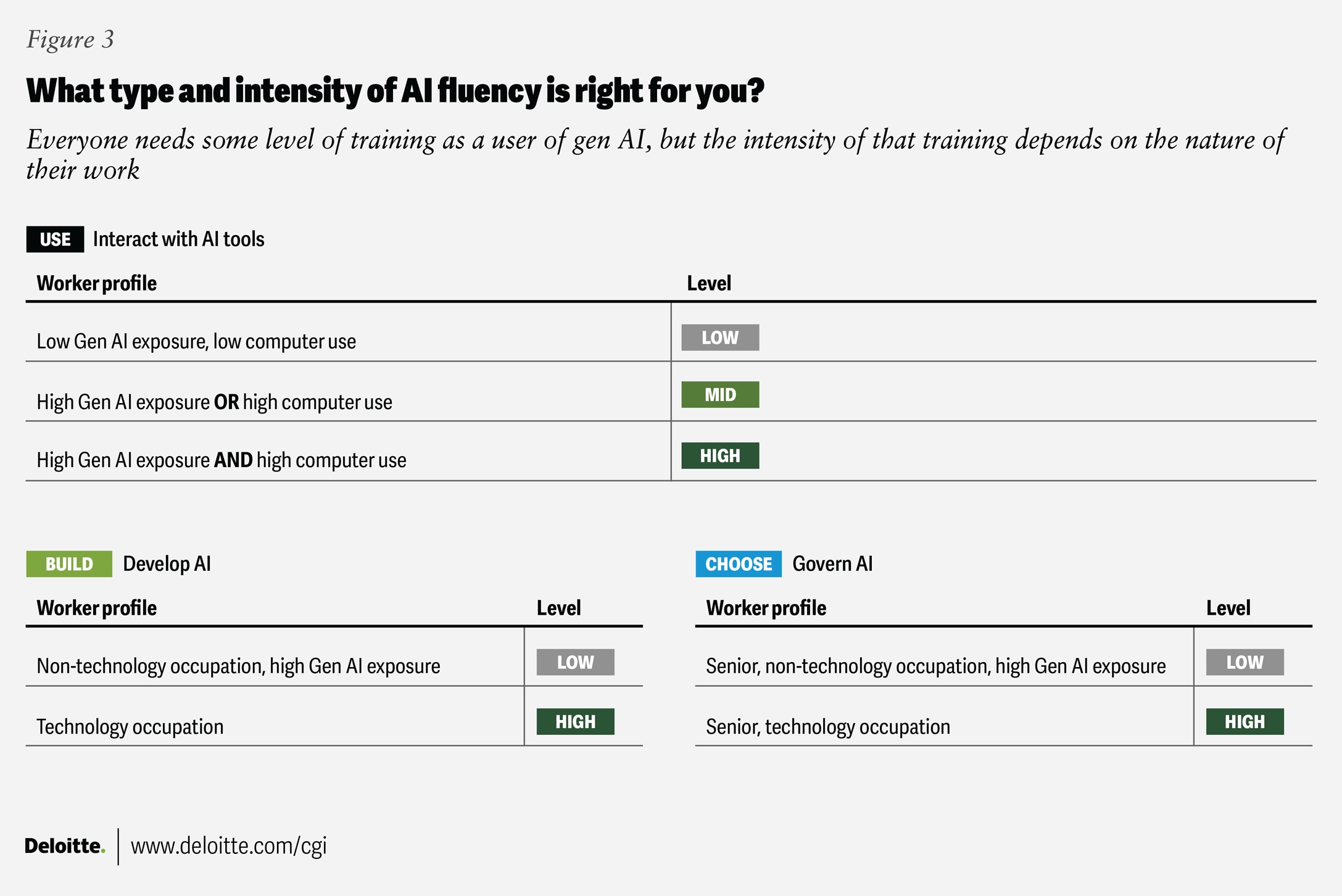

Using data from a government agency and Deloitte, we identified three factors that can help predict the type and intensity of training a worker needs (figure 3):

- Occupation

- Level of seniority or supervisory status

- Level of computer use in daily work

For example, occupations with higher gen AI exposure likely need higher intensities of “use” training. Similarly, one’s level within the organization and supervisory status are good indicators of the intensity and depth of “choose” training. Finally, the degree of computer and tech use is a helpful proxy for determining whether workers might need high intensities of “choose” or “build” training.
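The three factors can be read as a simple decision rule. The Python sketch below is a hypothetical illustration, not the model Deloitte used: the `Employee` fields, their 0.0 to 1.0 scales, and the `HIGH_EXPOSURE` and `HIGH_COMPUTER_USE` cut-offs are all assumptions chosen for readability. It gives every worker some level of “use” training, ties “choose” training to seniority and supervisory status, and uses computer use as the proxy for the high-intensity tracks.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical cut-offs for illustration only; the framework does not publish thresholds.
HIGH_EXPOSURE = 0.5      # assumed gen AI exposure score for an occupation (0.0-1.0)
HIGH_COMPUTER_USE = 0.6  # assumed share of the workday spent on a computer (0.0-1.0)


@dataclass
class Employee:
    occupation_exposure: float   # gen AI exposure of the person's occupation (assumed scale)
    is_supervisor: bool          # supervisory status
    is_senior_leader: bool       # level of seniority, simplified to a flag
    computer_use: float          # share of daily work done on a computer (assumed scale)
    is_it_professional: bool = False


def recommend_training(e: Employee) -> Dict[str, Optional[str]]:
    """Return an intensity ("high", "low", or None) for each fluency type."""
    heavy_tech = e.computer_use >= HIGH_COMPUTER_USE

    # Occupation: everyone gets "use" training; high-exposure occupations get more.
    use = "high" if e.occupation_exposure >= HIGH_EXPOSURE else "low"

    # Seniority/supervisory status: managers need "choose" fluency; tech-heavy
    # senior IT roles (e.g., an IT executive) get the high-intensity version.
    choose: Optional[str] = None
    if e.is_senior_leader and heavy_tech and e.is_it_professional:
        choose = "high"
    elif e.is_supervisor or e.is_senior_leader:
        choose = "low"

    # Computer use as a proxy for build/high. Build/low ("mission builders")
    # is left to self-selection, so it is never assigned automatically here.
    build = "high" if e.is_it_professional and heavy_tech else None

    return {"use": use, "choose": choose, "build": build}


analyst = Employee(occupation_exposure=0.8, is_supervisor=False,
                   is_senior_leader=False, computer_use=0.9)
print(recommend_training(analyst))  # {'use': 'high', 'choose': None, 'build': None}
```

Because mission builders depend more on curiosity than on job title, the sketch deliberately leaves build/low as opt-in; an agency would calibrate any cut-offs against its own workforce data rather than reuse these placeholder values.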

Different agency, different workforce, different training

Every agency operates differently, with different missions, skills, and work environments. So, there’s no single optimal training mix.

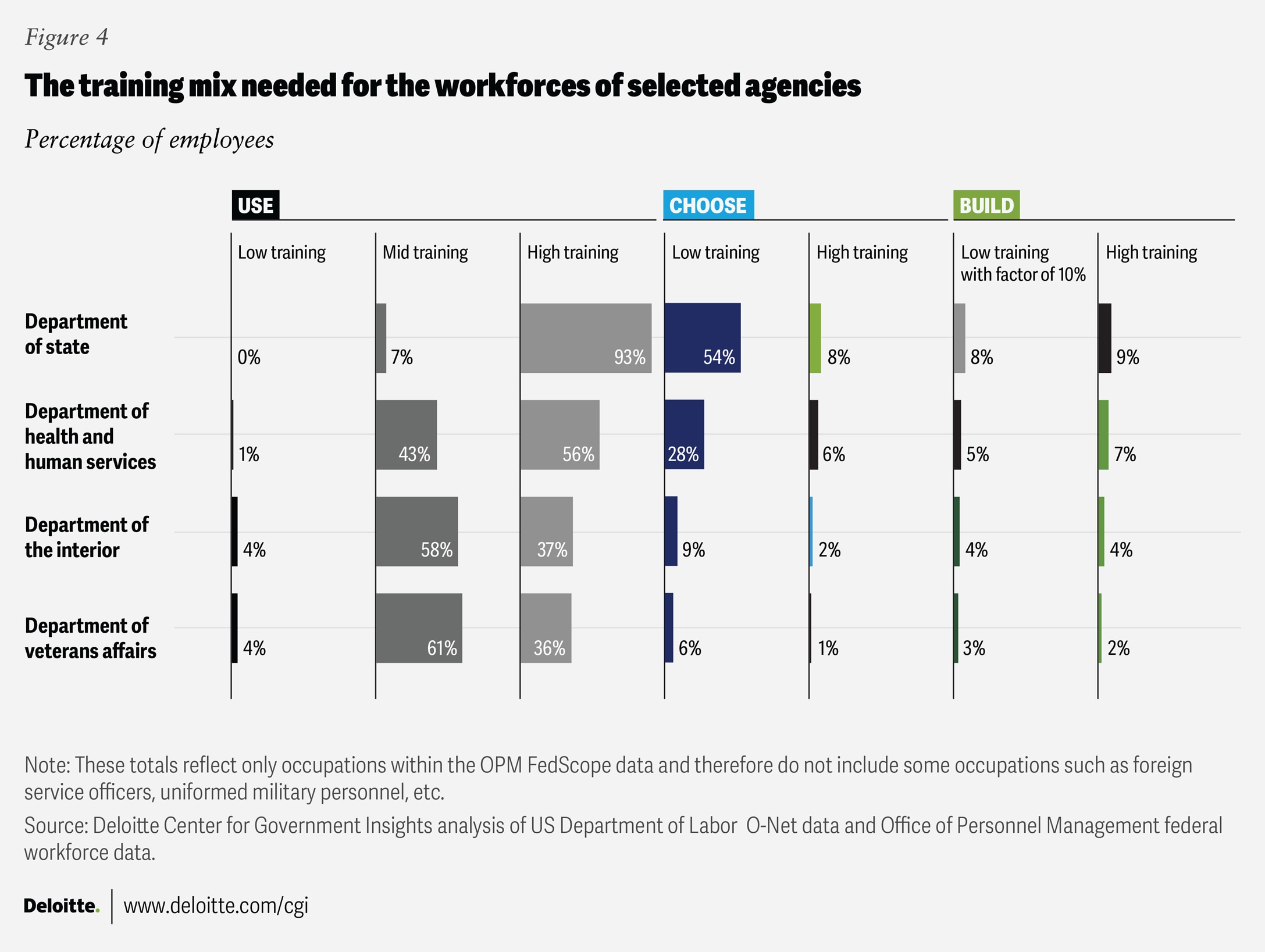

Leaders can use the framework’s two dimensions (type and intensity) to design personalized learning pathways that fit their people (figures 3 and 4).

For example, an agency like the Department of State, where much of the work is knowledge-based and computer-driven, will likely need a much higher percentage of its workforce to receive use/high training than an organization with more mixed physical and knowledge work.

The size of an agency’s IT workforce also shapes its training needs. Agencies with larger technical teams may need more choose/high and build/high training. In the agencies shown in figure 4, for example, between 2% and 9% of employees could benefit from high-intensity “build” training.

One common challenge is identifying “mission builders” who need build/low training. Because this role depends more on curiosity and initiative than job title, leaders may rely on self-selection or expressions of interest. Generally, ensuring that at least 10% of eligible employees receive this level of training can create a sufficient number of mission builders across the organization.

Leaders don’t need to know which mission builders will be the right fit from the start. Employees who initially just want “use” or “choose” training may build confidence and progress toward opting for more build-oriented training.
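The percentages above translate into headcounts with simple arithmetic. The sketch below is an illustration under stated assumptions: `build_training_targets` and `ceil_percent` are hypothetical helpers, the 2% to 9% build/high range and the 10% floor for build/low come from the text, and treating everyone not already slated for build/high training as “eligible” for build/low is our own simplifying assumption. Integer arithmetic rounds up without floating-point surprises.

```python
from typing import Dict


def ceil_percent(count: int, pct: int) -> int:
    """Smallest whole headcount that is at least pct% of count (integer math, rounds up)."""
    return (count * pct + 99) // 100


def build_training_targets(workforce: int, build_high_pct: int,
                           mission_builder_pct: int = 10) -> Dict[str, int]:
    """Rough headcount targets for the two "build" tracks.

    build_high_pct: percent of staff needing build/high training
        (the example agencies in the text range from 2 to 9).
    mission_builder_pct: minimum percent of eligible staff offered
        build/low training (the text's rule of thumb is at least 10).
    """
    if workforce < 0 or not 0 <= build_high_pct <= 100 or not 0 <= mission_builder_pct <= 100:
        raise ValueError("workforce must be >= 0 and percentages between 0 and 100")
    build_high = ceil_percent(workforce, build_high_pct)
    # Assumption: everyone not already slated for build/high is eligible for build/low.
    eligible = workforce - build_high
    return {
        "build_high": build_high,
        "eligible_for_build_low": eligible,
        "build_low_minimum": ceil_percent(eligible, mission_builder_pct),
    }


print(build_training_targets(5000, build_high_pct=5))
# {'build_high': 250, 'eligible_for_build_low': 4750, 'build_low_minimum': 475}
```

Rounding up errs toward training slightly more people than the percentage strictly requires, which fits the text’s framing of 10% as a minimum rather than a quota.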

And the results are real. In both Deloitte and government data, we observed a marked increase not just in the number of users but in how much they used the tools for work. Following tailored training, for example, weekly average users increased by 75% and prompts by 56%.

Create AI fluency that sticks

Organizations embarking on their AI fluency journey often encounter a seeming catch-22. Learners need role-relevant use cases to see the value of AI. Yet because AI adoption is often bottom-up, leaders may not know in advance which use cases will prove impactful. Three practical steps can help resolve this tension.

1. Be clear about the goals of AI adoption

Deloitte’s previous research on AI adoption has shown that organizations where senior leaders articulate a clear vision for AI are 50% more likely to achieve their AI goals.5 A clear AI strategy clarifies whether the primary aim is immediate efficiency gains or long-term value. Also, when leaders clearly communicate that AI is intended to augment, and not replace, human expertise, employees are more likely to engage constructively.6

See it in action: The US Department of the Interior’s 2025 AI Compliance Plan lays out a clear link between strategy and learning. The department’s strategy identified “transforming mission operations” as the primary goal of AI adoption.7 The Compliance Plan, in turn, found that a lack of knowledge about AI definitions and tools was “causing skepticism and confusion” about what AI could really do.8

To address this, the department is planning a tiered and tailored upskilling program. Training will provide foundational fluency for all employees and higher levels of training for specialists and AI champions, and it will feature examples tailored to specific roles to make AI concrete.9

2. Start with the ‘familiar-yet-new’ sweet spot

Historical precedents, like electricity, suggest that the largest benefit of AI will not come merely from efficiency gains but from allowing work to be done in entirely new ways. As AI reshapes the workplace, this creates a challenge. If workers learn about AI only in the context of their current tasks, they may not see enough value to justify adoption. However, some of the most valuable future use cases may be either unknown or a step or two removed from existing workflows.

Leaders can address this seeming paradox by finding use cases in the familiar-yet-new sweet spot. Sometimes this means targeting common but time-intensive work tasks within specific roles. Leaders don’t have to do all the work themselves. Peer-to-peer sharing can be particularly effective, enabling enthusiastic early adopters to provide practical examples that resonate with their peers. Incorporating such tailored examples into training can help learners build confidence in AI, improve output quality, and unlock greater AI-driven benefits.

See it in action: To meet diverse workforce needs, the US General Services Administration’s AI Community of Practice partnered with leading higher education institutions to deliver a tailored AI training series. The program included specialized tracks for technical staff, acquisition professionals, and leaders, covering topics such as AI safety, procurement considerations, risk management, governance, and emerging trends.10 About 14,000 participants from 200 government organizations attended, reporting a 92% average satisfaction rating.11

3. Keep training agile and measure success

AI technologies evolve rapidly, so workforce skills need to keep pace. Training programs, therefore, should be easy to update and deploy in different formats, including in-person learning and asynchronous video modules.

Organizations are increasingly designing AI training as an ongoing journey rather than a one-time intervention. Learning pathways can be tailored to common personas and adapted to different AI and gen AI implementation needs, with built-in mechanisms to track outcomes over time. Programs such as Deloitte’s Academy for AI show how tailored learning pathways and outcome tracking can help align workforce development with broader strategic goals. The journey doesn’t end when a course is completed. Leaders can track completion and shifts in employees’ attitudes toward AI, ensuring efforts stay aligned with the strategic priorities outlined in step 1. Ultimately, this is not training for training’s sake; it’s about building AI fluency to achieve mission outcomes.

See it in action: The United Kingdom’s 2023 One Big Thing initiative12 focused on data upskilling for public servants, providing online materials and asking all civil servants to complete seven hours of self-directed training between September and December 2023.

An evaluation task force tracked registration, completion rates, and total learning hours. It also surveyed participants’ self-reported data awareness and confidence and analyzed trends in bookings for centrally offered data trainings before and after the program. These metrics revealed both adoption levels and engagement gaps. The findings are now feeding into the design of future One Big Thing initiatives, making subsequent training efforts more targeted.13
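An evaluation like this rests on a few simple ratios. The sketch below shows one way an agency might compute similar indicators; the `ProgramMetrics` fields and the `summarize` and `percent_change` functions are hypothetical, and the numbers in the usage lines are made up for illustration, not figures from the UK program. The same `percent_change` arithmetic underlies statements such as a 75% increase in weekly users, which means usage reached 1.75 times its baseline.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ProgramMetrics:
    registered: int          # people who signed up
    completed: int           # people who finished the required hours
    learning_hours: float    # total hours logged across all participants
    bookings_before: int     # bookings for central data courses before the program
    bookings_after: int      # bookings for the same courses afterwards


def percent_change(before: float, after: float) -> float:
    """Relative change in percent; e.g. 400 -> 700 is +75%."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


def summarize(m: ProgramMetrics) -> Dict[str, float]:
    """Completion rate, average hours per completer, and change in course bookings."""
    return {
        "completion_rate_pct": 100 * m.completed / m.registered if m.registered else 0.0,
        "hours_per_completer": m.learning_hours / m.completed if m.completed else 0.0,
        "bookings_change_pct": percent_change(m.bookings_before, m.bookings_after),
    }


m = ProgramMetrics(registered=200, completed=150, learning_hours=1050,
                   bookings_before=40, bookings_after=70)  # illustrative numbers only
print(summarize(m))
# {'completion_rate_pct': 75.0, 'hours_per_completer': 7.0, 'bookings_change_pct': 75.0}
```

Guarding against a zero baseline matters in practice: a course with no bookings before the program has no meaningful percentage change, so it is better reported as a raw count.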

By investing in tailored, role-specific training and meeting users where they are, organizations have built AI fluency across the enterprise. Foundational training programs helped employees see the relevance of AI in their work, strengthening their confidence in the technology.

This is just one path to AI fluency, and each organization needs to walk its own. The potential benefits of AI in government—from millions of dollars to billions of hours saved—are significant. Realizing them depends on making the technology relevant to individuals.