Scaling the public sector’s human edge: Making human-AI collaboration work

As AI becomes embedded in government work, agencies are redesigning roles, skills, and workflows so technology amplifies human judgment rather than replaces it

From the wheel to the engine, the quill to the printing press, the postcard to the smartphone, humans have always used tools to accomplish their work.

As artificial intelligence becomes embedded in government work, it is shifting from tool to collaborator. The opportunity is not simply automation, but amplification—using technology to enhance uniquely human strengths such as judgment, creativity, and empathy.

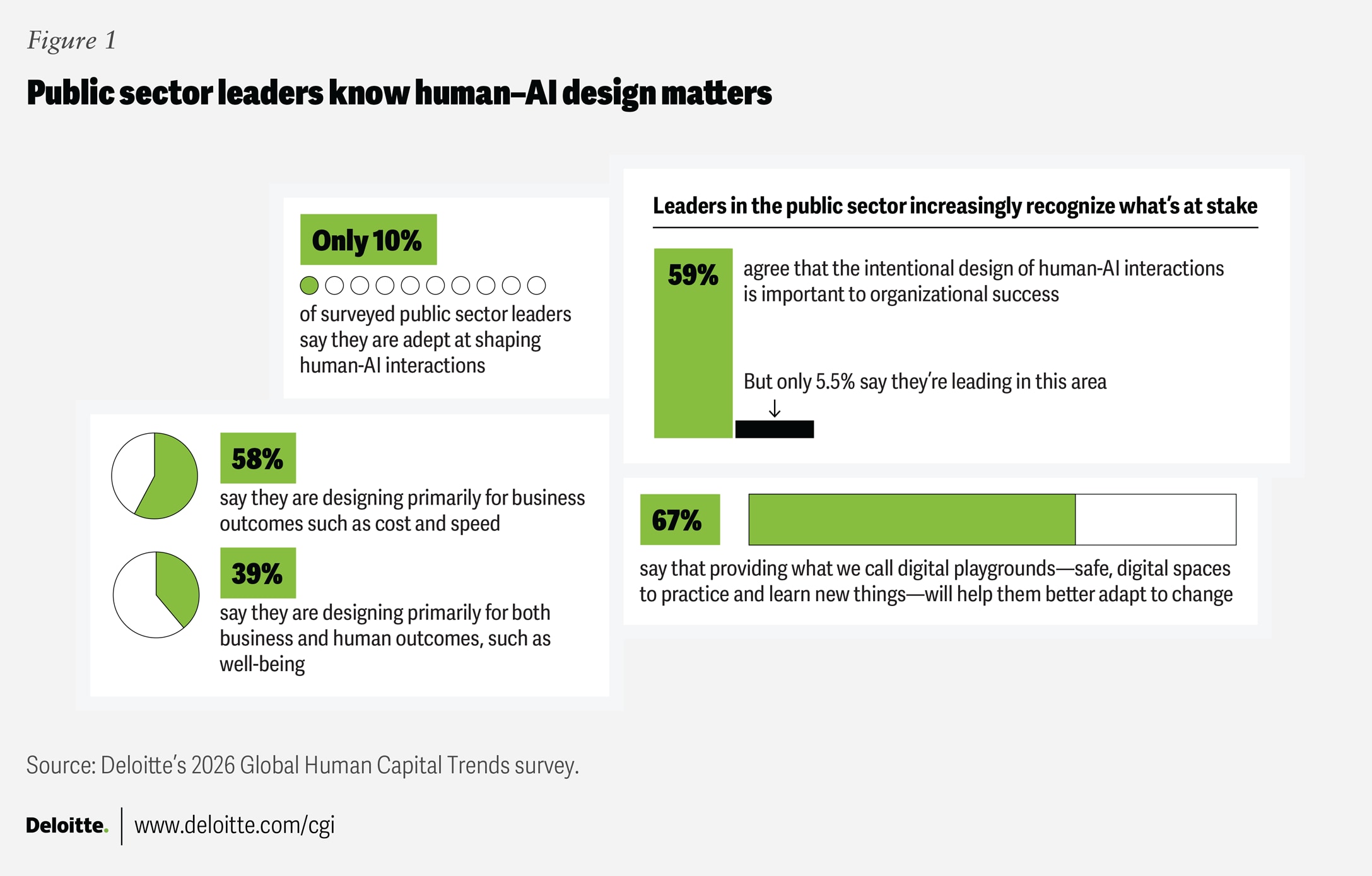

Realizing that potential is not primarily a technology challenge. It is a design challenge. Agencies must rethink roles, workflows, and skills so that humans and AI collaborate productively. Scaling the public sector’s human edge depends on three shifts: designing effective human-machine teaming, building adaptability into the workforce, and developing AI fluency across roles.

Signals: Scaling the public sector’s human edge

Trend in action

Designing human × machine collaboration that works

AI creates the greatest value when organizations intentionally design how humans and machines work together. The difference between additive (humans + machines) and multiplicative (humans × machines) collaboration lies in whether AI merely assists or actively amplifies human capability.

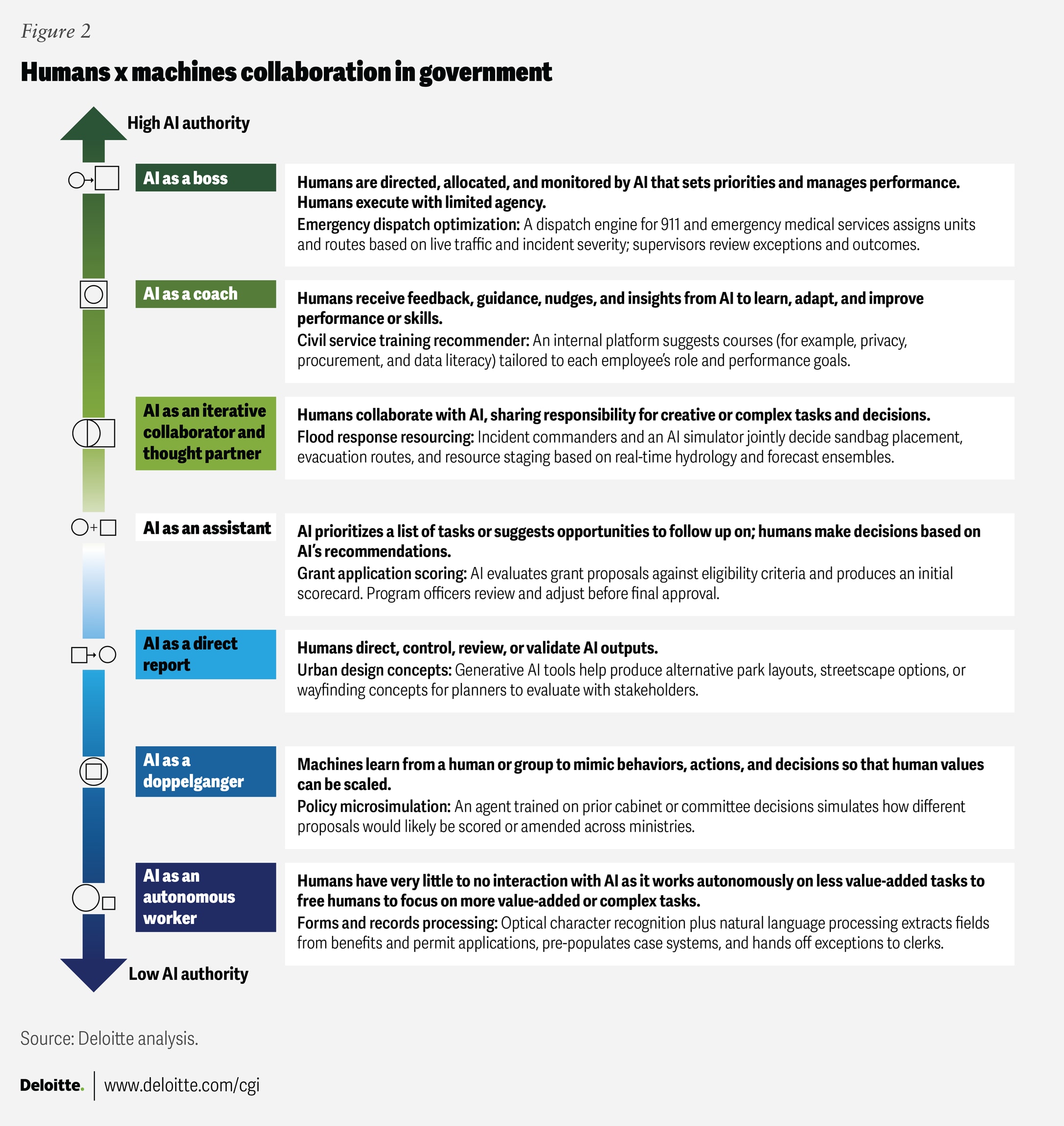

In public sector settings, AI is already augmenting situational awareness in disaster response, supporting frontline decision-making, and collaborating with public servants in many other ways, exercising varying degrees of agency (figure 2).

In all cases, humans remain accountable for outcomes—not just “in the loop,” but ultimately responsible.

When designed thoughtfully, human × machine collaboration can spark more innovation and efficiency than humans or machines could achieve alone. For example, one study found that when humans work closely and iteratively with AI, their performance can improve by up to 29% compared with humans or AI working alone.1

Organizations are exploring different ways to amplify the human edge.

Playing to human and AI strengths to unlock better outcomes

The strongest human-AI partnerships assign work based on comparative advantage. AI excels at pattern recognition, scale, and routine analysis. Humans remain essential for judgment, empathy, and navigating ambiguity.

Designing hybrid workflows requires clarity about this division of labor. Tasks demanding oversight or nuanced decision-making stay with people, while AI handles information processing for speed and precision.

The United Kingdom’s National Health Service illustrates this model. An AI-powered chatbot supports behavioral health triage, with clinicians reviewing high-risk cases flagged by the system. The result: a 30% increase in referral completion, a 23.5% reduction in assessment time, and an 18% decrease in treatment drop-off.2

When thoughtfully structured, human-AI collaboration improves both efficiency and outcomes—without shifting accountability away from people.

Designing for adoption and integration

Access to AI does not guarantee impact. Adoption depends on human-centered design, workflow integration, and continuous change management.

Leading organizations embed AI directly into existing systems to reduce context switching and minimize disruption. Buckinghamshire Council in the United Kingdom integrated AI into departmental systems, reducing call wrap time and administrative burden within months.3 Singapore similarly embedded AI tools into everyday government processes, cutting administrative time nearly in half.4

Structured experimentation further accelerates adoption. New Jersey’s AI sandboxes allowed employees to test generative AI safely before deployment, resulting in measurable gains: Self-resolved calls increased by 50%, response times fell by 35%, and more than 80% of users reported that the tools improved their work.5

Ultimately, adoption depends less on technical training than on redesigning work around outcomes. As one private sector leader observed, the real challenge is behavioral change—not simply access to new tools.6

Always-on change management to build resilience and adaptability

AI makes change continuous. Traditional transformation models, which follow an implement, stabilize, and move on cycle, are no longer sufficient. Agencies need change management that is embedded in daily work: iterative, lightweight, and ongoing.

Change starts at the unit of one, then ripples across the organization

Adaptation begins with the individual. Each caseworker, analyst, or officer must learn to work differently—and those individual shifts compound into organizational change. AI itself can support this transition. Intelligent agents can act as tutors and coaches, offering real-time feedback, suggesting improvements, and helping workers build skills through practice.7

The US Department of Veterans Affairs uses AI-powered simulations to help veterans crisis responders strengthen empathy and intervention skills in realistic scenarios.8 Montgomery County, Maryland, deploys AI as a “practice partner,” prompting teams to rehearse complex situations such as cyber incidents or emergency coordination before they occur. “These response muscles become more important when we talk about the collective, when we talk about teams, and so the ultimate focus might be: How do we make decisions under pressure together?” explains Michael Baskin, the county’s chief information officer.9

These approaches treat adaptation as a continuous capability—not a one-time initiative.

Experimentation is how learning scales safely

In dynamic environments, learning happens through structured experimentation. Agencies are building safe spaces—sandboxes, pilots, and controlled trials—that allow employees to test AI tools with clear guardrails before scaling them broadly.

Singapore’s Government Technology Agency has adopted this learning-by-doing approach through its AI Agents initiative. Public officers experiment with secure AI tools and develop internal bots for tasks such as research, policy review, and responding to public queries. Through hands-on use, they learn how systems behave, where oversight is required, and how to manage risk before broader deployment.10 To date, about 18,000 internal AI bots have been created, illustrating how a safe space for experimentation can help drive meaningful AI adoption. About 150,000 public officers were reported to be regular users of generative AI.11

By normalizing experimentation, agencies can accelerate adoption while maintaining trust and accountability.

Building AI fluency as a core workforce capability

As AI becomes embedded in daily work, fluency becomes essential. AI fluency is not technical mastery alone—it is the ability to understand how systems work, when to rely on them, and when to question them.

Just as physical fitness has different levels of intensity—depending on whether you are a hobby runner, a marathoner, or an Olympic athlete—there are at least three distinct types of AI fluency, based on the learner’s role, experience, and level of interest.

- Use fluency. Applying AI tools safely and effectively in everyday tasks

- Choose fluency. Evaluating tools, risks, and trade-offs to select appropriate solutions

- Build fluency. Designing and developing custom AI applications

The US Department of State’s StateChat program illustrates this tiered approach. Training pathways aligned to use, choose, and build fluency helped employees adopt generative AI responsibly while enabling more advanced users to design custom workflows. Structured learning, paired with governance guardrails, drove adoption while maintaining accountability.

Other governments are adopting similar models. California emphasizes foundational training in privacy, security, and AI bias awareness.12 San Jose pairs role-specific upskilling with certification pathways.13 Singapore’s Digital Academy offers differentiated tracks for leaders, officers, and developers.14 Across cases, the pattern is consistent: baseline literacy for all, deeper capability for many, and advanced expertise for a smaller cohort.

New skills to build—and old ones to double down on

As AI automates more routine analysis, human advantage shifts toward skills that are harder to replicate:

- Discernment

- Framing and problem decomposition

- Social judgment and legitimacy work

- Systems thinking under constraints

Judgment, in particular, becomes central. It develops through practice in simulations, sandboxes, and scenario-based exercises that allow workers to make decisions in low-risk environments before applying them in high-stakes contexts. Police departments in the United States and Canada are increasingly using virtual reality and simulation tools, some powered by AI, to train officers in de-escalation and crisis intervention.15 These simulations place officers in realistic high-stress scenarios, such as a tense domestic dispute or a mental health crisis, where they can test, assess, and improve their judgment under pressure.

Scaling the human edge requires deliberate cultivation of the skills AI cannot replace.

Enablers and accelerators

Leaders can begin scaling the public sector’s human edge by focusing on a few structural shifts.

- Modernize the workforce system. Align workflow redesign (“hard wiring”) with culture, leadership, and incentives (“soft wiring”) so human-AI collaboration is reinforced across the organization.

- Reengineer roles around outcomes. Redesign job architectures to reflect AI-enabled tasks and clarify accountability for human-machine workflows. Workforce skill sets and modular learning programs should map to roles and functions to address gaps.

- Establish clear design principles. Codify guardrails for collaboration—for example, “AI proposes, humans decide” in high-stakes contexts.

- Define decision rights by risk. Specify when AI can act autonomously, when humans must remain in the loop, and when decisions must remain human-only.

- Create space for responsible experimentation. Use sandboxes and pilots to test new tools safely, share lessons learned, and avoid common antipatterns such as siloed deployments or one-time training initiatives.
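The principles of defining decision rights by risk and "AI proposes, humans decide" can be made concrete as a policy table that maps each risk tier to a decision mode. The sketch below is a minimal illustration in Python, assuming three risk tiers; the names `Risk`, `DecisionMode`, `DECISION_RIGHTS`, `route`, and `ai_may_execute` are hypothetical and are not drawn from any agency's actual system.

```python
from enum import Enum


class Risk(Enum):
    """Hypothetical risk tiers; a real agency would define its own."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class DecisionMode(Enum):
    """The three decision modes described in the text."""
    AI_AUTONOMOUS = "AI acts autonomously; humans audit the logged outcome"
    HUMAN_IN_LOOP = "AI proposes; a human approves or overrides"
    HUMAN_ONLY = "a human decides; AI takes no action"


# Hypothetical policy table codifying who holds decision rights at each tier.
DECISION_RIGHTS = {
    Risk.LOW: DecisionMode.AI_AUTONOMOUS,
    Risk.MEDIUM: DecisionMode.HUMAN_IN_LOOP,
    Risk.HIGH: DecisionMode.HUMAN_ONLY,
}


def route(risk: Risk) -> DecisionMode:
    """Look up the decision mode for a risk tier.

    Any tier missing from the table falls back to HUMAN_ONLY, so a gap
    in the policy leaves accountability with people rather than the system.
    """
    return DECISION_RIGHTS.get(risk, DecisionMode.HUMAN_ONLY)


def ai_may_execute(risk: Risk) -> bool:
    """True only when the AI is allowed to act without human sign-off."""
    return route(risk) is DecisionMode.AI_AUTONOMOUS


if __name__ == "__main__":
    for tier in Risk:
        print(f"{tier.name}: {route(tier).value}")
```

Defaulting to the most restrictive mode is a deliberate, assumed design choice: if a new risk tier is added before the policy table is updated, the system fails safe and routes the decision to a person.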

Providing access to AI tools alone will not deliver results. Productivity gains emerge when work is redesigned, skills are developed, and governance keeps pace with capability.

Toward 2030: The future this trend could unlock

Human-agent teams become the norm. Public servants act as orchestrators of intelligence, directing networks of AI agents while retaining responsibility for intent, oversight, and outcomes.

AI is designed for cognitive ergonomics. Systems are built to support human reasoning—offering transparency, configurable risk thresholds, bias checks, and clear audit trails by default.

Work compresses around insight. Routine processing is automated at the point of data creation, allowing human roles to concentrate on interpretation, exception handling, and community engagement.

Professional identity evolves. Public servants develop hybrid expertise that blends domain knowledge with AI fluency and ethical discernment.

Judgment becomes a core institutional asset. Agencies invest deliberately in building decision-making capability through simulation, structured practice, and scenario-based learning.

Legitimacy becomes a design priority. AI-enabled actions are paired with explainability and visible human accountability, ensuring public trust keeps pace with technological capability.